Import existing blog posts

This commit is contained in:

@@ -7,7 +7,13 @@ import * as opml from 'opml';

|

|||||||

export default function (eleventyConfig) {

|

export default function (eleventyConfig) {

|

||||||

eleventyConfig.setInputDirectory("src")

|

eleventyConfig.setInputDirectory("src")

|

||||||

eleventyConfig.addPlugin(pugPlugin);

|

eleventyConfig.addPlugin(pugPlugin);

|

||||||

eleventyConfig.addPlugin(eleventyImageTransformPlugin);

|

eleventyConfig.addPlugin(eleventyImageTransformPlugin, {

|

||||||

|

htmlOptions: {

|

||||||

|

imgAttributes: {

|

||||||

|

alt: '',

|

||||||

|

},

|

||||||

|

},

|

||||||

|

});

|

||||||

eleventyConfig.addPlugin(EleventyRenderPlugin);

|

eleventyConfig.addPlugin(EleventyRenderPlugin);

|

||||||

eleventyConfig.addPassthroughCopy("src/assets/**")

|

eleventyConfig.addPassthroughCopy("src/assets/**")

|

||||||

|

|

||||||

@@ -18,7 +24,7 @@ export default function (eleventyConfig) {

|

|||||||

for ( const post of posts ) {

|

for ( const post of posts ) {

|

||||||

const year = post.date.getFullYear()

|

const year = post.date.getFullYear()

|

||||||

if ( !postsByYear[year] ) postsByYear[year] = []

|

if ( !postsByYear[year] ) postsByYear[year] = []

|

||||||

postsByYear[year].push(post)

|

postsByYear[year] = [post, ...postsByYear[year]]

|

||||||

if ( !years.includes(year) ) years.push(year)

|

if ( !years.includes(year) ) years.push(year)

|

||||||

}

|

}

|

||||||

|

|

||||||

|

|||||||

@@ -224,6 +224,7 @@ img {

|

|||||||

|

|

||||||

.blog.content-wrapper img {

|

.blog.content-wrapper img {

|

||||||

height: auto !important;

|

height: auto !important;

|

||||||

|

margin-top: 25px;

|

||||||

}

|

}

|

||||||

|

|

||||||

center {

|

center {

|

||||||

|

|||||||

@@ -7,7 +7,7 @@ block blog_content

|

|||||||

|

|

||||||

.recent-posts

|

.recent-posts

|

||||||

ul.plain

|

ul.plain

|

||||||

each post in collections.blog.slice(0, 10)

|

each post in collections.blog.reverse().slice(0, 5)

|

||||||

li

|

li

|

||||||

.secondary #{post.data.date.toISOString().split('T')[0]}

|

.secondary #{post.data.date.toISOString().split('T')[0]}

|

||||||

a.title(href=post.url) #{post.data.title}

|

a.title(href=post.url) #{post.data.title}

|

||||||

|

|||||||

174

src/blog/posts/2015-10-24-kali-usb.md

Normal file

174

src/blog/posts/2015-10-24-kali-usb.md

Normal file

@@ -0,0 +1,174 @@

|

|||||||

|

---

|

||||||

|

layout: blog_post

|

||||||

|

title: Installing Kali Linux from USB

|

||||||

|

slug: Installing-Kali-Linux-from-USB

|

||||||

|

date: 2015-10-24 00:01:00

|

||||||

|

tags: blog

|

||||||

|

permalink: /blog/2015/10/24/Installing-Kali-Linux-from-USB/

|

||||||

|

blogtags:

|

||||||

|

- kali

|

||||||

|

- linux

|

||||||

|

- tutorial

|

||||||

|

---

|

||||||

|

|

||||||

|

Linux. When most people think about Linux, they envision hackers and scrolling lines of code; however, today there are many modern Linux distributions (brands, if you will) that offer modern user interfaces and compatible software. These distributions are designed for the end-user; modern, daily driver operating systems. Kali Linux is not among these.

|

||||||

|

|

||||||

|

To quote the Kali Documentation, “**Kali Linux** is the new generation of the industry-leading BackTrack **Linux** penetration testing and security auditing **Linux** distribution. **Kali Linux** is a complete re-build of BackTrack from the ground up, adhering completely to Debian development standards.”

|

||||||

|

|

||||||

|

Basically, Kali is the ultimate OS for ethical hackers. It contains tools for secure penetration testing. Basically, it is used to test how secure “secure” systems really are. It is a good way to get into the more advanced functions of computers and networks. If this piques your interest, then stick around. In this tutorial I will be documenting how to write the installer to a USB drive and install Kali Linux to the hard drive or a partition (more on that later).

|

||||||

|

|

||||||

|

Side Note: For those of you using VirtualBox or similar, set the Type to Linux and the Version to Oracle (either 64 or 32 bit based on your operating system).

|

||||||

|

|

||||||

|

### Materials Needed:

|

||||||

|

|

||||||

|

* USB Flash Drive (4gb or greater)

|

||||||

|

|

||||||

|

* An Internet Connection

|

||||||

|

|

||||||

|

* Windows or Linux Computer

|

||||||

|

|

||||||

|

* A Bit of Patience

|

||||||

|

|

||||||

|

### Step 1: Downloading Kali

|

||||||

|

|

||||||

|

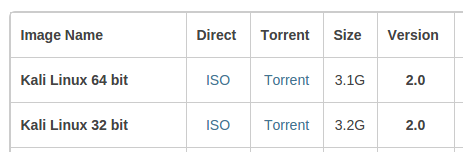

To install Kali, we will boot from an install disc turned USB drive. First, we need the installer ISO. Download this from:

|

||||||

|

[**Kali Linux Downloads**

|

||||||

|

*Download Kali Linux Images We generate fresh Kali Linux image files every few months, which we make available for…*www.kali.org](https://www.kali.org/downloads/)

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

Be sure to select 64/32 bit based on your system.

|

||||||

|

|

||||||

|

### Step 2a: Formatting the USB Drive (Windows)

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

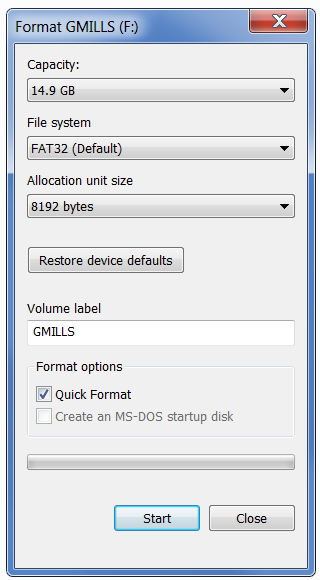

To format the USB drive on a Windows computer, insert the drive and remove any files you want to keep. Right click on the drive and click format. For the file system, select FAT32.

|

||||||

|

|

||||||

|

Quick format is fine, unless the drive was in a different format than FAT or NTFS. Then deselect this option.

|

||||||

|

|

||||||

|

### Step 2b: Formatting the USB Drive (Linux)

|

||||||

|

|

||||||

|

For this, we will be using gparted. If you don’t have it, it is available in the default repos on most distributions. Simply issue the installation command.

|

||||||

|

|

||||||

|

Ubuntu & Derivatives:

|

||||||

|

|

||||||

|

**sudo apt-get install gparted**

|

||||||

|

|

||||||

|

Fedora & Derivatives:

|

||||||

|

|

||||||

|

**sudo yum install gparted**

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

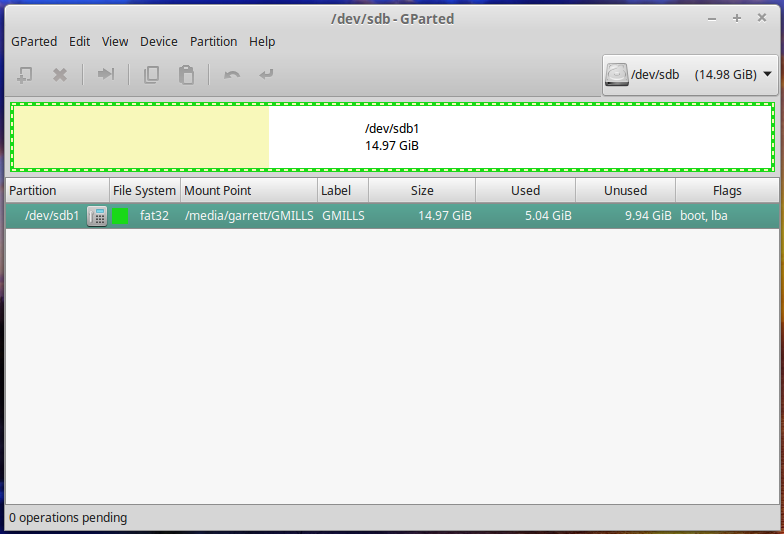

Plug in your drive and open gparted. In the top right corner, and select the /dev/sdb object. Right click the main partition and click unmount.

|

||||||

|

|

||||||

|

Under the device menu, select ‘Create Partition Table’ and approve it. You will then see that the drive has been converted to unallocated space. Right click the space, select new.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

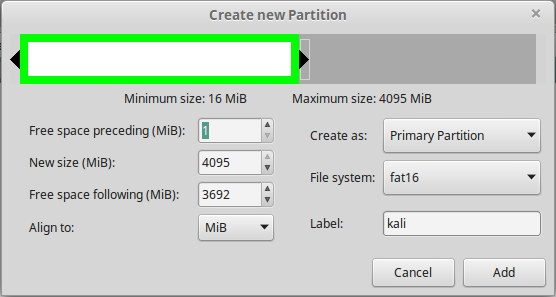

Change the file system type to FAT16. THIS IS IMPORTANT.

|

||||||

|

|

||||||

|

Pick a label and click add. Press Ctrl+Enter to apply the operation.

|

||||||

|

|

||||||

|

### Step 3: Writing the Installer to the USB

|

||||||

|

|

||||||

|

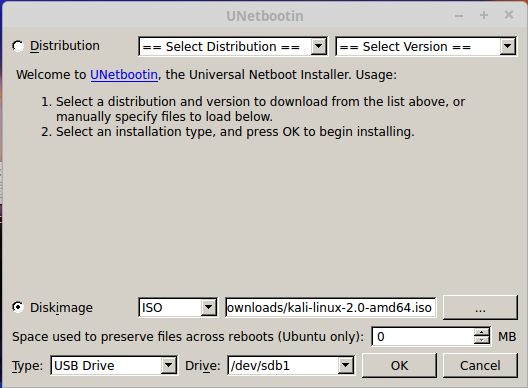

To write the Installation ISO to the USB drive, we will use a program called unetbootin.

|

||||||

|

|

||||||

|

Windows — Download from here:

|

||||||

|

[**UNetbootin**

|

||||||

|

*UNetbootin allows you to create bootable Live USB drives for Ubuntu and other Linux distributions without burning a CD…*unetbootin.github.io](http://unetbootin.github.io/)

|

||||||

|

|

||||||

|

Linux — Available in most repositories.

|

||||||

|

|

||||||

|

Ubuntu & co:

|

||||||

|

|

||||||

|

**sudo apt-get install unetbootin**

|

||||||

|

|

||||||

|

Fedora & co:

|

||||||

|

|

||||||

|

**sudo yum install unetbootin**

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

Open the program and select your newly formatted USB drive, as well as the downloaded ISO.

|

||||||

|

|

||||||

|

Click OK to begin the process. When it finishes, it will ask you to reboot your computer.

|

||||||

|

|

||||||

|

Now the fun starts.

|

||||||

|

|

||||||

|

### Step 5: Boot

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

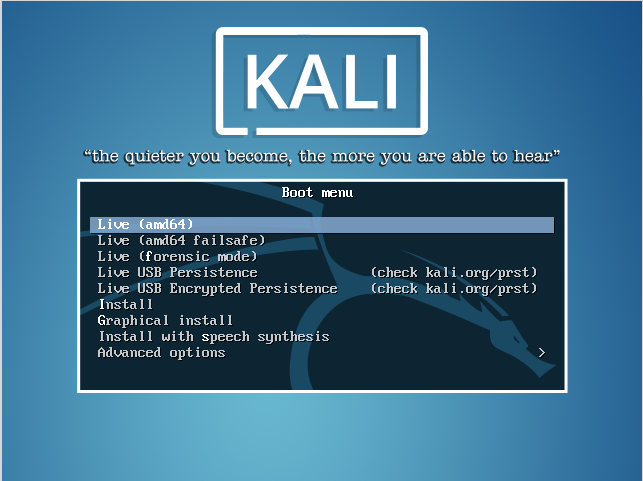

Plug the USB installer into the computer you are installing Kali on. When booting the computer, repeatedly press the trigger key to enter the boot option menu (usually F12), and select the USB drive.

|

||||||

|

|

||||||

|

You will then see the Unetbootin bootloader menu. Select the Live Boot option for Kali Linux.

|

||||||

|

|

||||||

|

### Step 6: Partitioning

|

||||||

|

|

||||||

|

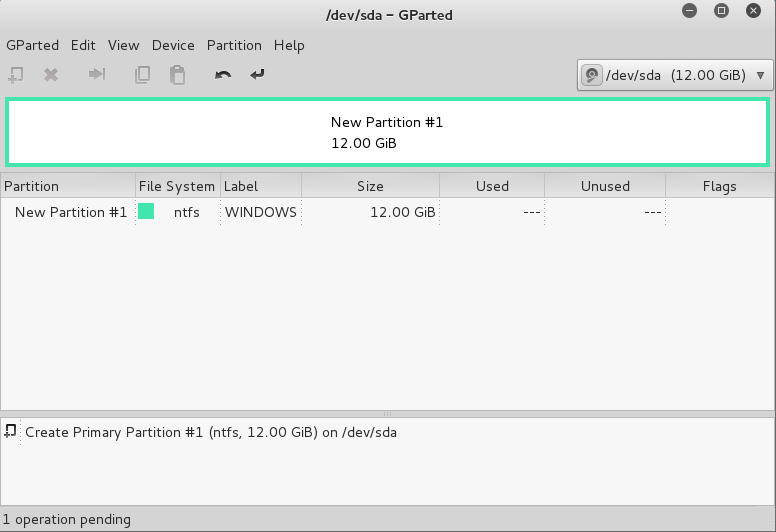

If you plan to dual-boot Kali with another OS, you will need to partition the drive to create space for both operating systems. If you are installing Kali by itself, skip this section. On the GNOME3 toolbar, click Show All Applications, and launch gparted.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

You should get a window looking (more or less) like this (left).

|

||||||

|

|

||||||

|

Right click on the existing partition, and click Resize/Move.

|

||||||

|

|

||||||

|

**NOTE: If you are dual booting Windows with Kali, it is important that you defragment all disks prior to re-sizing the partition, or some files may be lost.**

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

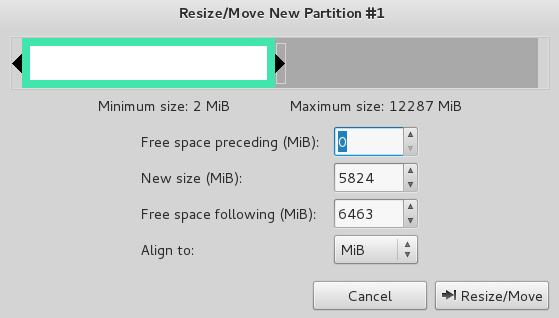

Adjust the partition size to create space for Kali. Kali needs about 10GB for a minimal install with some file storage. Obviously the sizes in this picture (left) are not accurate. Hit resize/move to apply.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

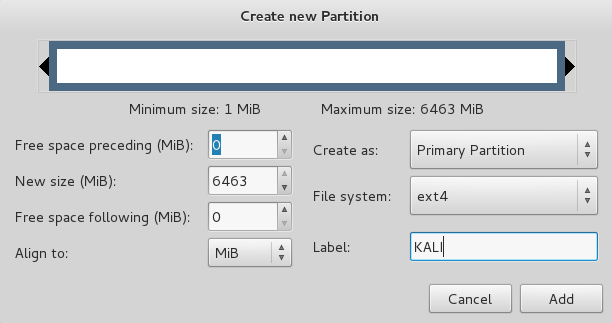

Right click on the new unallocated space, click NEW and create a partition, making sure the Type is ext4.

|

||||||

|

|

||||||

|

Next, press Ctrl+Enter to apply the changes.

|

||||||

|

|

||||||

|

### Step 7: Install

|

||||||

|

|

||||||

|

Next, click show applications in the GNOME3 toolbar, and search for install. Launch the application Install Kali. This will launch a graphical wizard installer.

|

||||||

|

|

||||||

|

Note: If your installer looks like this (left), and you cannot see all of it, do not fret. Simply press the Windows button to open the GNOME menu, then drag the installer from the current workspace to a new one.

|

||||||

|

|

||||||

|

**NOTE: If the Kali installer locks itself due to inactivity, the password is toor (root backwards).**

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

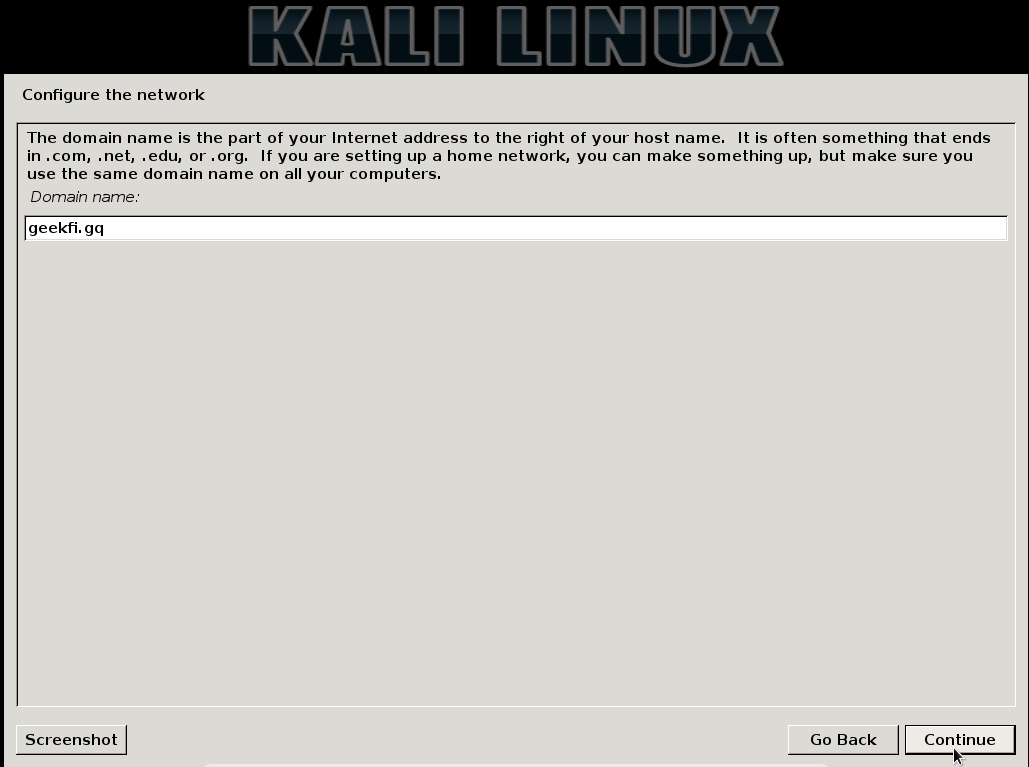

Basically, point and click your way through Location, Language, and Keyboard, and after a bit of loading, you will see a screen asking you to supply a domain name.

|

||||||

|

|

||||||

|

This is the domain name the system will use by default (akin to localhost). If you are confused, or don’t have one, just leave this blank.

|

||||||

|

|

||||||

|

Set a strong root password, and breeze through the time zone, and it is time to configure the disks.

|

||||||

|

|

||||||

|

### Step 4: Partitioning

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

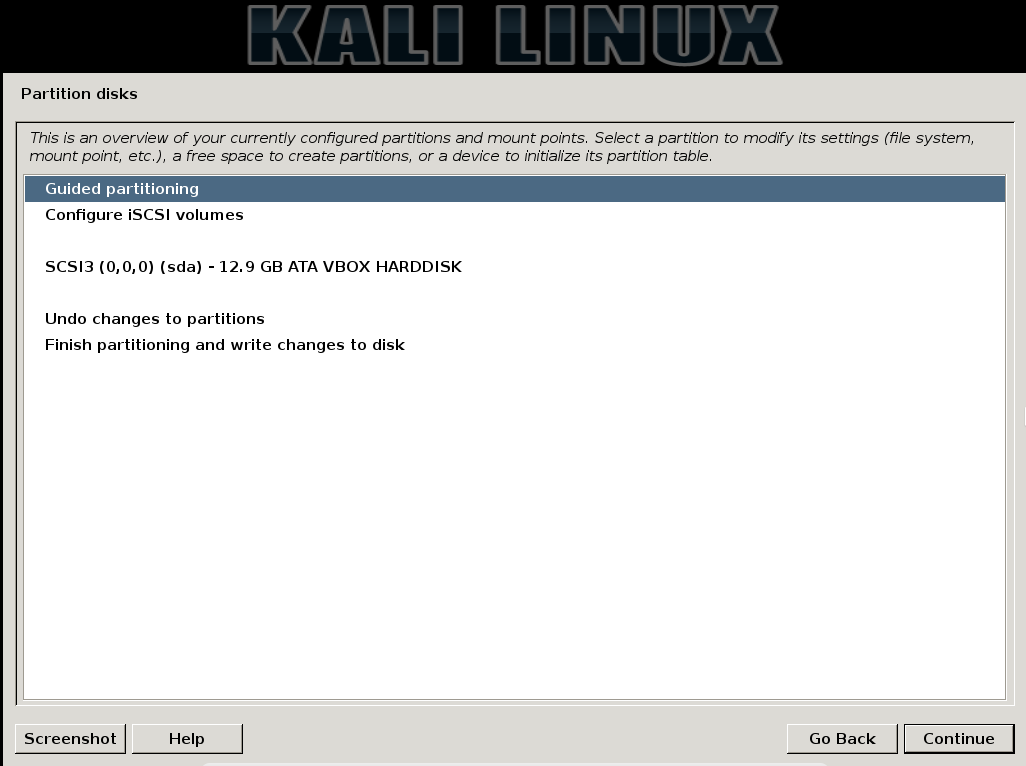

|

At this point you will see a asking you to configure disks. Click manual and then continue and you will see this screen (left).

|

||||||

|

|

||||||

|

The middle section is a list of all recognized storage drives. If you have already configured your partition, skip this next part.

|

||||||

|

|

||||||

|

Click on the partition you created in step 6 and click continue.

|

||||||

|

|

||||||

|

If you reach a screen asking you to create a new partition table, click yes if you are using Kali by itself, no if you are dual-booting then click continue.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

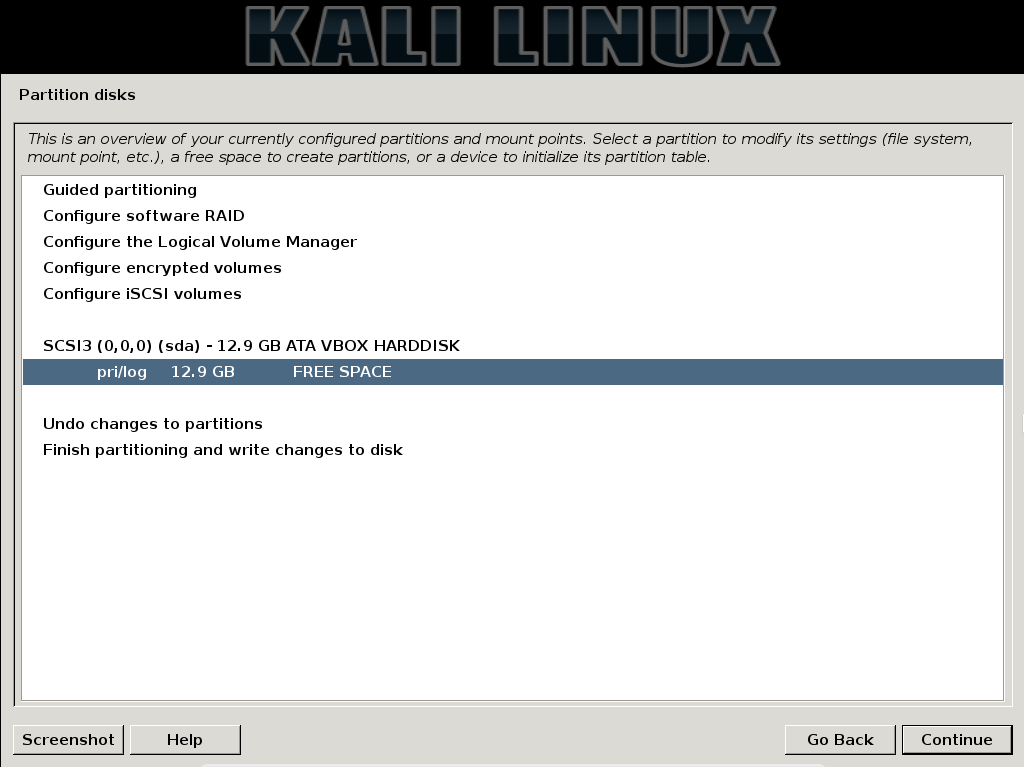

On the next screen, you will see a list of all devices and partitions, including your created partition, or FREE SPACE if you are installing on a single OS computer. Click on the desired partition and click continue.

|

||||||

|

|

||||||

|

If you are asked to create a new partition, simply point and click through the wizard until you see this next screen:

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

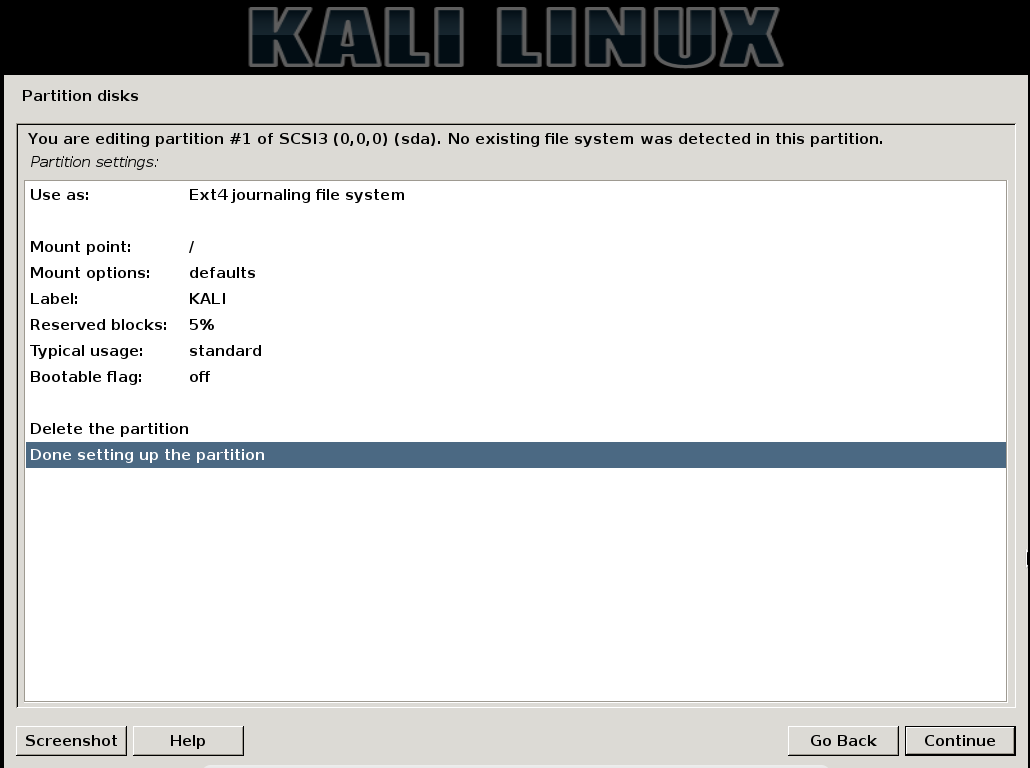

On this screen, make sure it is set to Ext4 journaling file system. Set the mount point to / and label it something. Click “Done setting up the partition” and Continue to move on to the next step.

|

||||||

|

|

||||||

|

This will take you to the previous screen, with your new partition. Verify it is correct, then select “Finish partitioning…” and click Continue.

|

||||||

|

|

||||||

|

You will be prompted with a screen requesting you to make a swap partition. Click NO and continue. On the next screen, it will confirm that the changes be written to the disk. Click YES and Continue. Shortly after this, it will install the system to the HDD. Simply point and click through the remainder of the wizard, and when it is installed, simply reboot. Take care to remove the USB drive first.

|

||||||

|

|

||||||

|

Note — After install, if Kali doesn’t appear in the GRUB bootloader for your current system, open your current Linux system and issue the following command:

|

||||||

|

|

||||||

|

**sudo update-grub**

|

||||||

91

src/blog/posts/2018-02-22-ubuntu-onedrive.md

Normal file

91

src/blog/posts/2018-02-22-ubuntu-onedrive.md

Normal file

@@ -0,0 +1,91 @@

|

|||||||

|

---

|

||||||

|

layout: blog_post

|

||||||

|

title: OneDrive Sync for Linux (Ubuntu)

|

||||||

|

slug: OneDrive-Sync-for-Linux-Ubuntu

|

||||||

|

date: 2018-02-22 00:01:00

|

||||||

|

tags: blog

|

||||||

|

permalink: /blog/2018/02/22/OneDrive-Sync-for-Linux-Ubuntu/

|

||||||

|

blogtags:

|

||||||

|

- onedrive

|

||||||

|

- linux

|

||||||

|

- tutorial

|

||||||

|

---

|

||||||

|

So, a while back I decided to make the switch back to the Dark Side and moved all of my computers back to Windows 10 Pro from Ubuntu. My primary motivation for this was because I needed to use the Adobe Creative suite for project. After the project was completed, I just sort of stuck with Windows, largely out of complacency, but also because the Anniversary Update introduced a few really awesome features. The most useful of these was replacing the default Desktop/Documents/Pictures folders with OneDrive folders.

|

||||||

|

|

||||||

|

So, when I switched back to Ubuntu recently (mostly so I could use KDE Connect), I tried to find a OneDrive client that would do the same things. Unsurprisingly, no first-party client exists from our Microsoft overlords, and there is a stunning lack of third-party clients. However, thanks to a project called onedrive-d, I got it working. Here’s how.

|

||||||

|

|

||||||

|

(For the lazy among you, [here’s an automatic script.](https://gist.github.com/glmdev/6ab5bbdfde5da3b065eba3fb38f91c24))

|

||||||

|

|

||||||

|

## Step 1: Install onedrived

|

||||||

|

|

||||||

|

onedrived is a third-party, CLI-based OneDrive client created by Xiangyu Bu. It provides basic OneDrive sync. Clean and simple.

|

||||||

|

|

||||||

|

Install git if you don’t have it:

|

||||||

|

```shell

|

||||||

|

sudo apt install git -y

|

||||||

|

```

|

||||||

|

Clone the onedrive-d files to a hidden folder:

|

||||||

|

|

||||||

|

```shell

|

||||||

|

git clone [https://github.com/xybu/onedrived-dev.git](https://github.com/xybu/onedrive-d-old.git) ~/.odd

|

||||||

|

cd ~/.odd

|

||||||

|

```

|

||||||

|

Run the Installer:

|

||||||

|

|

||||||

|

```shell

|

||||||

|

sudo python3 ./setup.py install

|

||||||

|

```

|

||||||

|

|

||||||

|

## Step 2: Install ngrok

|

||||||

|

|

||||||

|

ngrok is a 3rd-party platform for relaying webhook integration. onedrived uses ngrok to allow webhook requests from the OneDrive API to the onedrived client without requiring port-forwarding or similar.

|

||||||

|

|

||||||

|

Download ngrok from [here](https://ngrok.com/download) and extract the ngrok file. Then, copy it to a path directory using the following command:

|

||||||

|

|

||||||

|

`sudo mv ~/path/to/extracted/ngrok /usr/bin/ngrok`

|

||||||

|

|

||||||

|

## Step 3: Configure onedrived

|

||||||

|

|

||||||

|

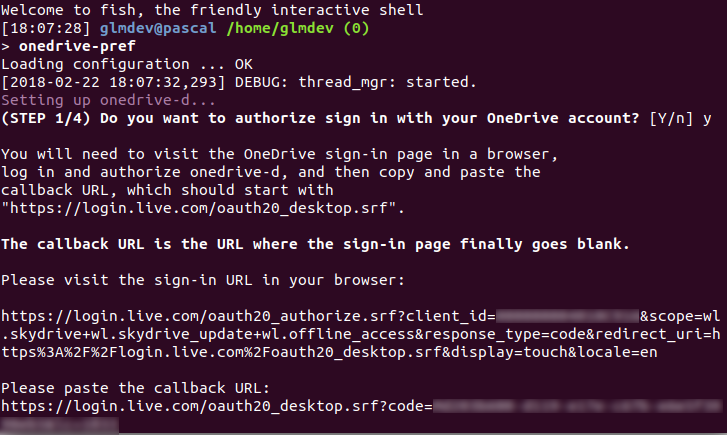

After installing onedrived, you need to authorize it to access your Microsoft account. To do this, run the following command to configure onedrived interactively:

|

||||||

|

|

||||||

|

`onedrived-pref account add`

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

This command will launch an interactive wizard to help you configure onedrived. First, copy the given URL into your browser of choice and complete the Microsoft login and permissions. After you allow onedrived access, it will land you on a blank white page. Copy the URL of this page, paste it back into the interactive prompt.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

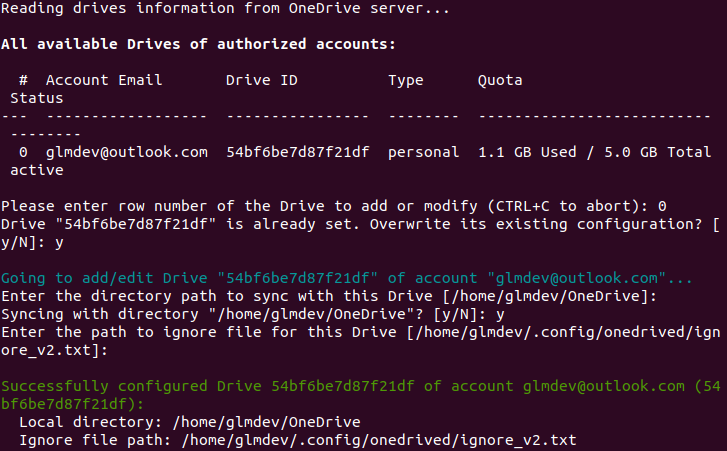

Now, you need to select which virtual drive to use with onedrived. Set the virtual drive by running the following command:

|

||||||

|

|

||||||

|

`onedrived-pref drive set`

|

||||||

|

|

||||||

|

It will run you through some interactive setup questions. Leave the default directory path the same, tell it y to sync that directory, and leave the default ignore file.

|

||||||

|

|

||||||

|

Lastly, you can start the OneDrive daemon by running:

|

||||||

|

|

||||||

|

`onedrived start`

|

||||||

|

|

||||||

|

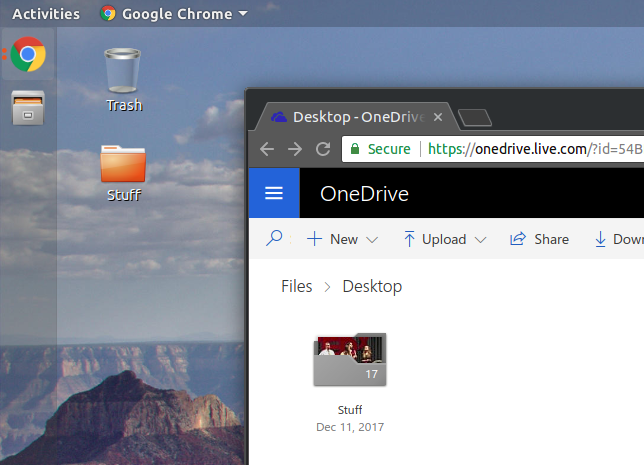

At this point, onedrived will begin syncing your OneDrive files with the local computer. This may take some time if you have a particularly large OneDrive. You can access the files by navigating to Home > OneDrive.

|

||||||

|

|

||||||

|

This, however, isn’t as seamless as Microsoft’s implementation. For that, we need to change the default folders that Nautilus uses. To do this, open the user’s directory configuration file using the following command:

|

||||||

|

|

||||||

|

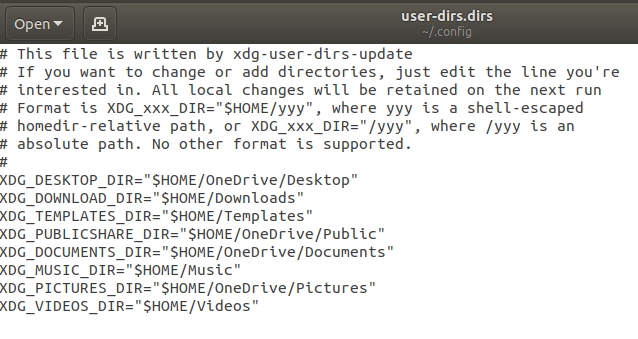

`gedit ~/.config/user-dirs.dirs`

|

||||||

|

|

||||||

|

The user-dirs.dirs file tells GNOME/Nautilus which folders to use for the default Desktop/Documents/Pictures/etc. folders. Change the default paths to their respective OneDrive folders (make sure you’ve created the folders on your OneDrive) like so:

|

||||||

|

|

||||||

|

*You can pick which folders you want to sync over OneDrive and which ones you want to use locally. In this case, I’ve chosen to sync my Desktop, Public, Documents, and Pictures folders.*

|

||||||

|

|

||||||

|

Finally, run the following command to ensure your changes are preserved:

|

||||||

|

|

||||||

|

`echo "enabled=false" > ~/.config/user-dirs.conf`

|

||||||

|

|

||||||

|

## Step 3: Enable the OneDrive Daemon on Login

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

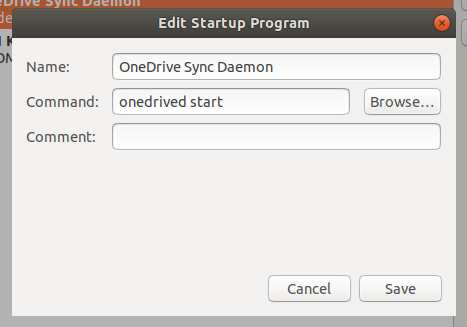

The onedrive-d daemon doesn’t automatically start by itself, so to ensure that OneDrive starts syncing automatically when you log in, we need to tell GNOME to start it on login. Run the gnome-session-properties command to open GNOME’s configuration. Then, add a startup program to run the onedrived start command.

|

||||||

|

|

||||||

|

Finally, log out and log back in to restart Nautilus and from now on, GNOME and Nautilus should seamlessly sync with your OneDrive.

|

||||||

|

|

||||||

|

|

||||||

196

src/blog/posts/2018-07-07-gluster-on-zfs.md

Normal file

196

src/blog/posts/2018-07-07-gluster-on-zfs.md

Normal file

@@ -0,0 +1,196 @@

|

|||||||

|

---

|

||||||

|

layout: blog_post

|

||||||

|

title: A Noob's Guide to Gluster on ZFS

|

||||||

|

slug: A-Noob-s-Guide-to-Gluster-on-ZFS

|

||||||

|

permalink: /blog/2018/07/07/A-Noob-s-Guide-to-Gluster-on-ZFS/

|

||||||

|

tags: blog

|

||||||

|

date: 2018-07-07 01:00:00

|

||||||

|

blogtags:

|

||||||

|

- gluster

|

||||||

|

- zfs

|

||||||

|

- linux

|

||||||

|

- tutorial

|

||||||

|

---

|

||||||

|

|

||||||

|

# A Noob’s Guide to Gluster on ZFS

|

||||||

|

|

||||||

|

I run a Dell PowerEdge R710 with effectively 680GB of RAID storage. However, over the years, I’ve added on to my network, and as a result have needed more storage. My haphazard solution for this was to just add additional storage machines I have lying around for things like the network backup server and media storage, but this isn’t very… elegant. Aside from the fact that my data are not centrally accessible, it also means that I have to have between 3 and 5 network shares mounted at any given point.

|

||||||

|

|

||||||

|

So, I embarked on a mission to combine my various network storage boxes into one big SAMBA share using GlusterFS. But, I found that the documentation for getting into this, especially for we beginners, is a bit sparse, so I decided to chronicle my journey here.

|

||||||

|

|

||||||

|

## Part 1: The Hardware

|

||||||

|

|

||||||

|

Before we can begin software-linking storage together, we need to have physical storage in the first place. Luckily, Gluster and ZFS are pretty flexible with the hardware they can utilize, so there’s *some *leniency in designing your system. Here’s mine:

|

||||||

|

|

||||||

|

### Server 1: Violet

|

||||||

|

|

||||||

|

Violet is the original NAS. It has 6x146GB SAS drives running in an actual, physical, yes-they-still-exist hardware RAID. I will be including this server by creating a folder in the RAID volume and adding that to the GlusterFS volume.

|

||||||

|

|

||||||

|

### Server 2: Archimedes

|

||||||

|

|

||||||

|

Archimedes is an old HP tower that I’d formerly re-purposed as a media server. Aside from its 80GB boot disk, it has 3x250GB hard drives running in it, which we will be using with ZFS.

|

||||||

|

|

||||||

|

### Server 3: Newton

|

||||||

|

|

||||||

|

Newton is also fashioned from an old HP tower I had lying around. The drive setup here is a bit interesting, though. It too has an 80GB boot drive, but instead of 3x250GB drives, it has 2x250GB drives and 1x320GB drive. This is mostly because I’m cheap and didn’t want to buy another 250GB drive. The beauty of ZFS, however, is that it can use mismatched drive sizes, but each drive behaves as though it were the capacity of the smallest drive. So, it will effectively become a 3x250GB setup, but more on that later.

|

||||||

|

|

||||||

|

## Part 2: The OS

|

||||||

|

|

||||||

|

There has been a lot of drama in the GNU/Linux community in the past over the state of ZFS. Operated by Oracle, for a period around the mid-2010s, ZFS only *really* ran well on Oracle’s server OS, Solaris. The Linux versions of ZFS were buggy, out-of-date, and generally not very reliable. However, in recent years, thanks to a community effort to further develop the software, ZFS support on traditional Linux platforms has become basically indistinguishable from Solaris.

|

||||||

|

|

||||||

|

Which is why, for this project, we’ll be installing Ubuntu Server 18.04 LTS on all the machines. It supports the latest versions of both Gluster and ZFS, and the long-term support ensures stability and updates for years to come.

|

||||||

|

|

||||||

|

So, do a fresh install of Ubuntu Server on each machine’s boot disk (*not* the ZFS storage disks), run updates, and let’s get to the fun stuff.

|

||||||

|

|

||||||

|

One thing to note is that, during the Ubuntu Server install, **I highly recommend setting *static *IP addresses for the different servers** so they don’t change between reboots. This can mess up Gluster’s ability to find the other servers.

|

||||||

|

|

||||||

|

## Part 3: ZFS!

|

||||||

|

|

||||||

|

Now for the fun part. I imagine if you made it this far, you probably have some idea of how ZFS works, but here’s a quick rundown. ZFS is a software RAID-style storage utility that makes use of physical drives to create cross-disk redundant storage pools.

|

||||||

|

|

||||||

|

First, we need to install ZFS. On Ubuntu, this is as simple as running:

|

||||||

|

|

||||||

|

`sudo apt install zfsutils-linux -y`

|

||||||

|

|

||||||

|

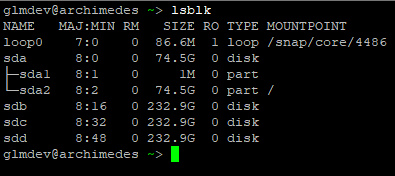

Now, we need to identify the drives we are going to use. To do this, log (or SSH) in to the first server and run lsblk to view the drives.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

Using the output, identify the drive names for the ZFS storage drives. In my case, these are /dev/sdb, /dev/sdc, and /dev/sdd.

|

||||||

|

|

||||||

|

Next, we have to decide what sort of redundancy to use. In my case, I want to use a 1-drive redundancy on each individual server, then use Gluster to pool all the storage together. This means that, on any server, a single drive can fail, and the whole system will continue to run, but the Gluster pool has no server-by-server redundancy (meaning that a failed server will make at least some of the Gluster data inaccessible). This obviously isn’t the most data-secure system, but for my purposes, it’s “good enough” that I’m not concerned in the day-to-day.

|

||||||

|

|

||||||

|

### Creating the ZFS Pool

|

||||||

|

|

||||||

|

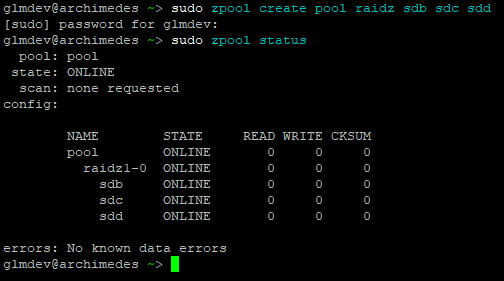

Now we can create the actual ZFS pool. We are going to be using ZFS’ RAID-Z format. This is a single-drive parity format that will give us 1 drive of redundancy. (for my 3x250GB machines, this amounts to about 465GB of usable space) To do this, run:

|

||||||

|

|

||||||

|

`sudo zpool create pool raidz sdb sdc sdd`

|

||||||

|

|

||||||

|

Where “pool” is the name of the pool (you can name it whatever you want), “raidz” is the format, and that is followed by our drives. The zpool command always has to be run as root.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

After creating the pool, run sudo zpool status to view (*gasp*) the status. This is also how you can check if drives in the pool have failed.

|

||||||

|

|

||||||

|

By default, ZFS mounts the pool in the root directory. So, in my case, the ZFS pool is mounted at /pool.

|

||||||

|

|

||||||

|

Repeat this process, creating ZFS pools, for each of the servers you intend to use in the Gluster volume.

|

||||||

|

> Note: if you are using drives of different sizes, the zpool command will complain about it. To override this, pass it the -f argument like so:

|

||||||

|

> `sudo zpool create pool raidz sdb sdc sdd -f`

|

||||||

|

|

||||||

|

## Part 4: Gluster

|

||||||

|

|

||||||

|

Finally! We can install Gluster and get our monster network drive online! Well, almost. First we have to do some…

|

||||||

|

|

||||||

|

### Pre-configuration

|

||||||

|

|

||||||

|

Gluster relies on resolvable host-names to find the other servers it needs to talk to. This means that when you run ping server1 or whatever the name of your server is, it needs to actually resolve to that server’s IP address. This is why I suggested setting static IP addresses during the OS install.

|

||||||

|

|

||||||

|

There are a few ways of achieving this. For my network, I am using a local-network DNS server. I just manually assigned the host-names to the static IP addresses, and since all the servers use the local DNS server to resolve names, they can find each other. If you don’t have a local DNS server, don’t fret, there is another way.

|

||||||

|

|

||||||

|

We are going to edit the /etc/hosts files on each server to map the host names of the other servers to their IP addresses. On each server, open the hosts file in your favorite editor of choice, like so:

|

||||||

|

|

||||||

|

*Note: you don’t need to add the address of the server the /etc/hosts file resides on.*

|

||||||

|

|

||||||

|

`sudo nano /etc/hosts`

|

||||||

|

|

||||||

|

Add the storage server’s static IP addresses, as well as their host names to this file. Save it, close it, and repeat this process for each server you intend to put in the Gluster volume.

|

||||||

|

|

||||||

|

Now, we need to install the latest GlusterFS software.

|

||||||

|

|

||||||

|

I’ve found that the version of Gluster that is packages in the Ubuntu 18.04 LTS repositories is outdated (largely because the Canonical team refuses to consult the Gluster team on version choices, but whatever). To address this, we’re going to add the [Gluster PPA](https://launchpad.net/~gluster/+archive/ubuntu/glusterfs-4.1) before installing the GlusterFS software.

|

||||||

|

|

||||||

|

`sudo add-apt-repository ppa:gluster/glusterfs-4.1 && sudo apt update`

|

||||||

|

|

||||||

|

As of July 2018, GlusterFS 4.1 is the latest build for Ubuntu. Now, we can install the software:

|

||||||

|

|

||||||

|

`sudo apt install glusterfs-server -y`

|

||||||

|

> Note: if, like me, one of your servers is using hardware RAID, simply create a folder in the root drive of that server and mount the RAID drive to that folder. Gluster may complain about it, but it will work.

|

||||||

|

|

||||||

|

### Creating the Gluster Volume

|

||||||

|

|

||||||

|

GlusterFS relies on “peers” to access and store data across servers. To set this up, we need to connect the peers together.

|

||||||

|

> Note: Unless otherwise noted, the rest of the commands from here on only need to be run on one of the servers in the Gluster group, not all of them.

|

||||||

|

|

||||||

|

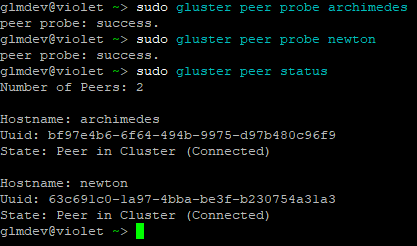

Add the peers by running this command once for each of the servers:

|

||||||

|

|

||||||

|

`sudo gluster peer probe <server hostname>`

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

Then, you can check to make sure the peers were added and joined the cluster by running:

|

||||||

|

> `sudo gluster peer status`

|

||||||

|

> Note: if you are having problems adding peers, try disabling the firewall: `sudo ufw disable`

|

||||||

|

|

||||||

|

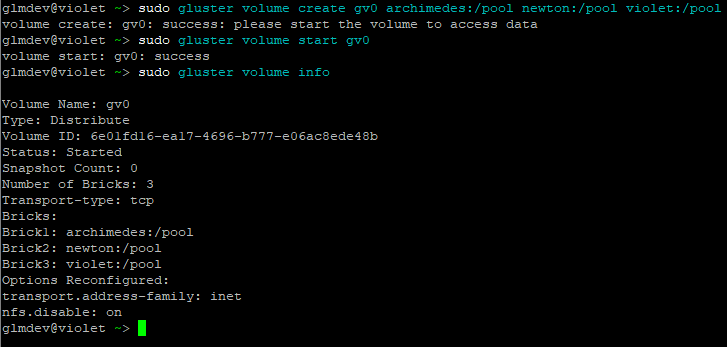

Next, create and then start the actual GlusterFS Volume like so:

|

||||||

|

|

||||||

|

`sudo gluster volume create gv0 server1:/pool server2:/pool`

|

||||||

|

|

||||||

|

`sudo gluster volume start gv0`

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

After starting the volume, check its status by running:

|

||||||

|

|

||||||

|

`sudo gluster volume info`

|

||||||

|

|

||||||

|

And success! At last, we have our GlusterFS volume up and running.

|

||||||

|

|

||||||

|

## Step 5: Setting Up SAMBA Access

|

||||||

|

|

||||||

|

While we have our GlusterFS volume, we don’t have an easy way to access or use it. So, we’re going to set up a no-permissions SMB share for clients on the network.

|

||||||

|

> Note: I know this isn’t the most secure, but again, for my network it’s “good enough.” Feel free to customize your SMB permissions.

|

||||||

|

|

||||||

|

### Mounting the GlusterFS Volume

|

||||||

|

|

||||||

|

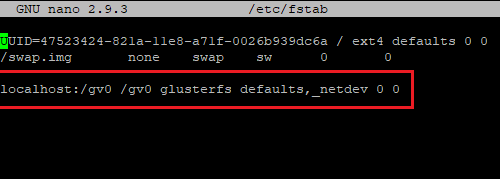

First, let’s mount the GlusterFS volume to a folder. To do this, we’re going to edit /etc/fstab to include the following line:

|

||||||

|

|

||||||

|

`localhost:/gv0 /gv0 glusterfs defaults,_netdev 0 0`

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

This will permanently mount the GlusterFS volume gv0 attached to localhost to the /gv0 folder. Now, refresh the mounts by running sudo mount -a.

|

||||||

|

> Note: you’ll need to create /gv0.

|

||||||

|

|

||||||

|

### SAMBA

|

||||||

|

|

||||||

|

Install SAMBA on the server with the mounted volume:

|

||||||

|

|

||||||

|

`sudo apt install samba -y`

|

||||||

|

|

||||||

|

Now we need to modify the permissions of the directory to allow guests write access:

|

||||||

|

|

||||||

|

`sudo chown nobody.nogroup -R /gv0`

|

||||||

|

|

||||||

|

`sudo chmod 777 -R /gv0`

|

||||||

|

|

||||||

|

Then create the share by adding this to the end of /etc/samba/smb.conf:

|

||||||

|

|

||||||

|

[gluster-drive]

|

||||||

|

browseable = yes

|

||||||

|

path = /gv0

|

||||||

|

guest ok = yes

|

||||||

|

read only = no

|

||||||

|

create mask = 777

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

Test the syntax by running testparm, and provided everything passed the syntax check, restart SAMBA by running:

|

||||||

|

|

||||||

|

`sudo service smbd restart ; sudo service nmbd restart`

|

||||||

|

|

||||||

|

And that’s it!

|

||||||

|

|

||||||

|

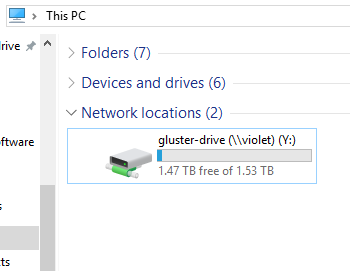

## Success!

|

||||||

|

|

||||||

|

We have now successfully created a GlusterFS volume on ZFS nodes, and mounted it for the network to enjoy!

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

You should now be able to mount your new drive over the network and have read/write access to it.

|

||||||

|

|

||||||

|

To stop the Gluster volume, run sudo gluster volume stop gv0 on any of the nodes before shutting them down.

|

||||||

|

|

||||||

|

To restart the volume, start up all of the nodes, then run sudo gluster volume start gv0. Don’t forget to sudo mount -a and restart SAMBA.

|

||||||

|

|

||||||

|

This has been an incredibly fun project to undertake. If you have any comments, questions, or if you’re having problems getting it to work, leave a comment below!

|

||||||

143

src/blog/posts/2018-07-11-pfsense-on-esxi.md

Normal file

143

src/blog/posts/2018-07-11-pfsense-on-esxi.md

Normal file

@@ -0,0 +1,143 @@

|

|||||||

|

---

|

||||||

|

layout: blog_post

|

||||||

|

title: How to Set Up Virtualized pfSense on VMware ESXi 6.x

|

||||||

|

slug: Host-to-Set-Up-Virtualized-pfSense-on-VMware-ESXi-6-x

|

||||||

|

date: 2018-07-11 00:01:00

|

||||||

|

tags: blog

|

||||||

|

permalink: /blog/2018/07/11/Host-to-Set-Up-Virtualized-pfSense-on-VMware-ESXi-6-x/

|

||||||

|

blogtags:

|

||||||

|

- tutorial

|

||||||

|

- networking

|

||||||

|

- virtualization

|

||||||

|

---

|

||||||

|

|

||||||

|

For the longest time, my router/firewall solution has been a Raspberry Pi 3 with a USB network dongle running dnsmasq. While this worked well enough, it didn’t offer much by way of advanced configuration (at least not easily), and the lack of a GUI was often a pain. Plus, I wanted my Raspberry Pi back. So, in this saga, we’ll be taking a look at how to create a virtualized firewall by running pfSense on VMware ESXi.

|

||||||

|

|

||||||

|

## What We’ll Be Building

|

||||||

|

|

||||||

|

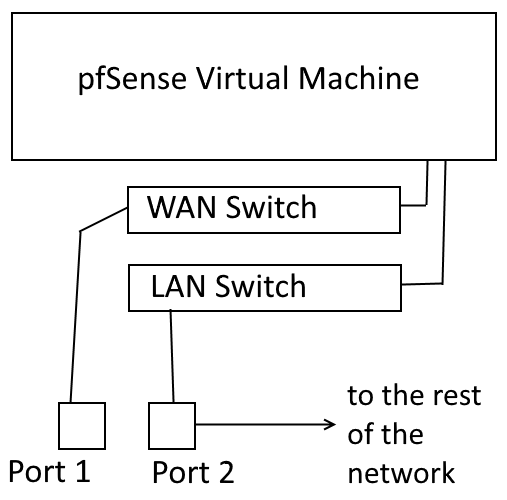

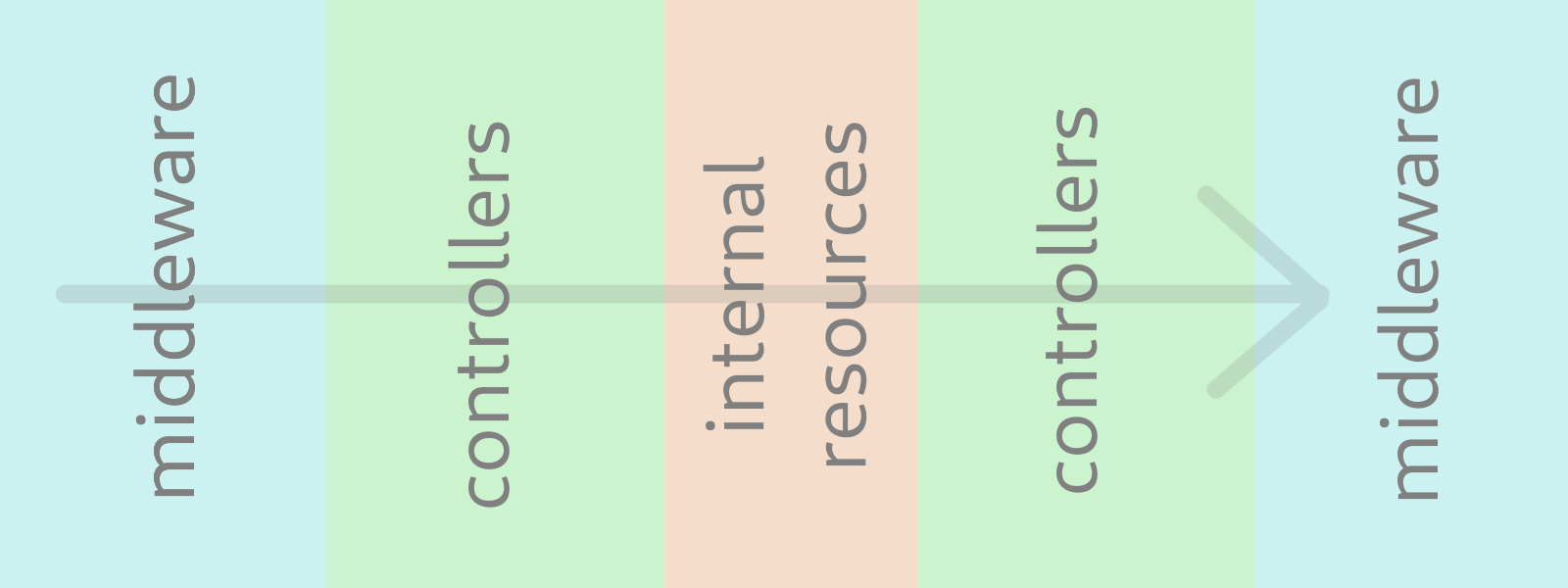

I like visual diagrams, so to kind of lay out what we’ll be doing, here’s a crudely MS Paint-ed diagram of how the virtual network will function:

|

||||||

|

|

||||||

|

*my 3rd grade computer teacher would be proud*

|

||||||

|

|

||||||

|

## Step 1: Requirements

|

||||||

|

|

||||||

|

So, for this project, you need a couple things. First, you need a server running VMware ESXi 6.x. Importantly, that server should have at least 2 network ports. That will enable us to have a WAN and a LAN port. For this, I’ll be using my Dell PowerEdge R710, which has 4x1GbE ports on the back, and it runs VMware ESXi 6.5.0.

|

||||||

|

> Note: With some finagling, the same result can be achieved with a single NIC using VLANs, as long as your switches/infrastructure support it. This, however, is beyond the scope of this tutorial.

|

||||||

|

|

||||||

|

Obviously, you will also need a WAN connection, as well as a switch or device to connect to the LAN port.

|

||||||

|

> Learn from my mistakes: Don’t forget to download pfSense *before* you start reconfiguring your internet connection…

|

||||||

|

|

||||||

|

## Step 2: Configuring ESXi & Management Connection

|

||||||

|

|

||||||

|

To achieve our virtual firewall, we’re going to create 2 virtual port-groups in ESXi: one for the WAN connection, and one for the LAN connection and add a physical port to each of those groups.

|

||||||

|

|

||||||

|

### Create The Virtual Switch(es)

|

||||||

|

|

||||||

|

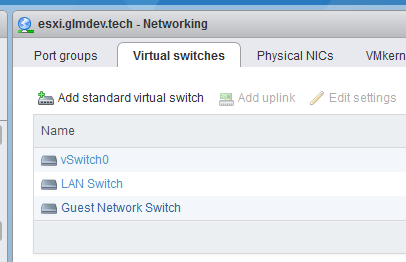

We need to add the virtual switch for our LAN connection, then assign it to a physical port. Do this by logging into the ESXi interface and accessing Networking > Virtual Switches > Add standard virtual switch.

|

||||||

|

|

||||||

|

*Additional interfaces for the firewall should be added here.*

|

||||||

|

|

||||||

|

Name the switch something meaningful and assign it a physical port.

|

||||||

|

|

||||||

|

For the WAN switch, we’re going to use the built-in “VM Network” switch. If you want any additional network ports for the firewall, add them now.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

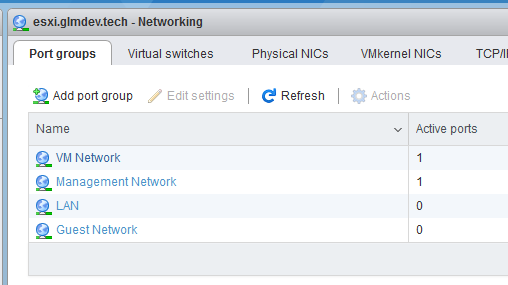

Now, we need to create “port groups” for the new switches. Open the Port Groups tab and create port groups for each of the new switches you added.

|

||||||

|

|

||||||

|

### The Management Connection

|

||||||

|

|

||||||

|

During most of this process, your computer will not have internet access, or DHCP service. This means that, in order to maintain access to the ESXi interface, we need to set static IP addresses on both the ESXi management interface and the computer we’re working from.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

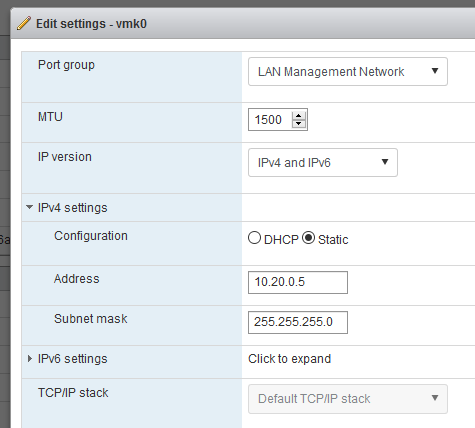

From the networking tab, access VMkernel NICs > vmk0 > Edit Settings > IPv4 settings

|

||||||

|

|

||||||

|

Set a static IP address that is within the address space of the new network. (i.e. if the new network is 10.20.0.1– 10.20.0.255, I picked 10.20.0.5)

|

||||||

|

|

||||||

|

Save and Apply the settings. Then, open your computer’s network settings and set its static IP to something in the same address space, and set the IP of the ESXi host as the gateway. **This will disrupt internet/intranet communications, but it will ensure continual access to the ESXi interface.**

|

||||||

|

|

||||||

|

## Step 3: Installing pfSense

|

||||||

|

> In point of fact, this can really be done with any firewall/router software you want to use (IPFire/OPNsense/routerOS/etc), I just chose pfSense.

|

||||||

|

|

||||||

|

Create a new virtual machine, and, for pfSense, select OS family: Other and set the OS to “FreeBSD (64-bit).”

|

||||||

|

|

||||||

|

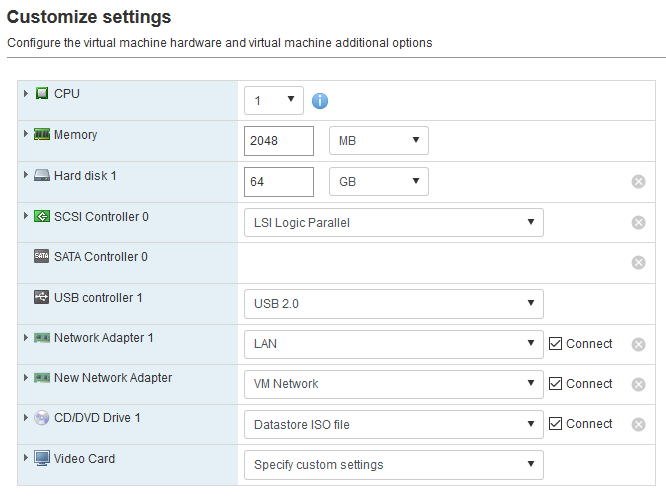

Tab through the wizard until you land on the VM’s configuration page. Here we need to modify a few things.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

Add an additional Network adapter using the button at the top, and then select the LAN port-group we created earlier. You should have a network adapter with a WAN port-group by default.

|

||||||

|

|

||||||

|

Then, in the CD/DVD drive, select the pfSense installer ISO from the datastore. Now you can click create and start the VM.

|

||||||

|

|

||||||

|

### pfSense Install

|

||||||

|

|

||||||

|

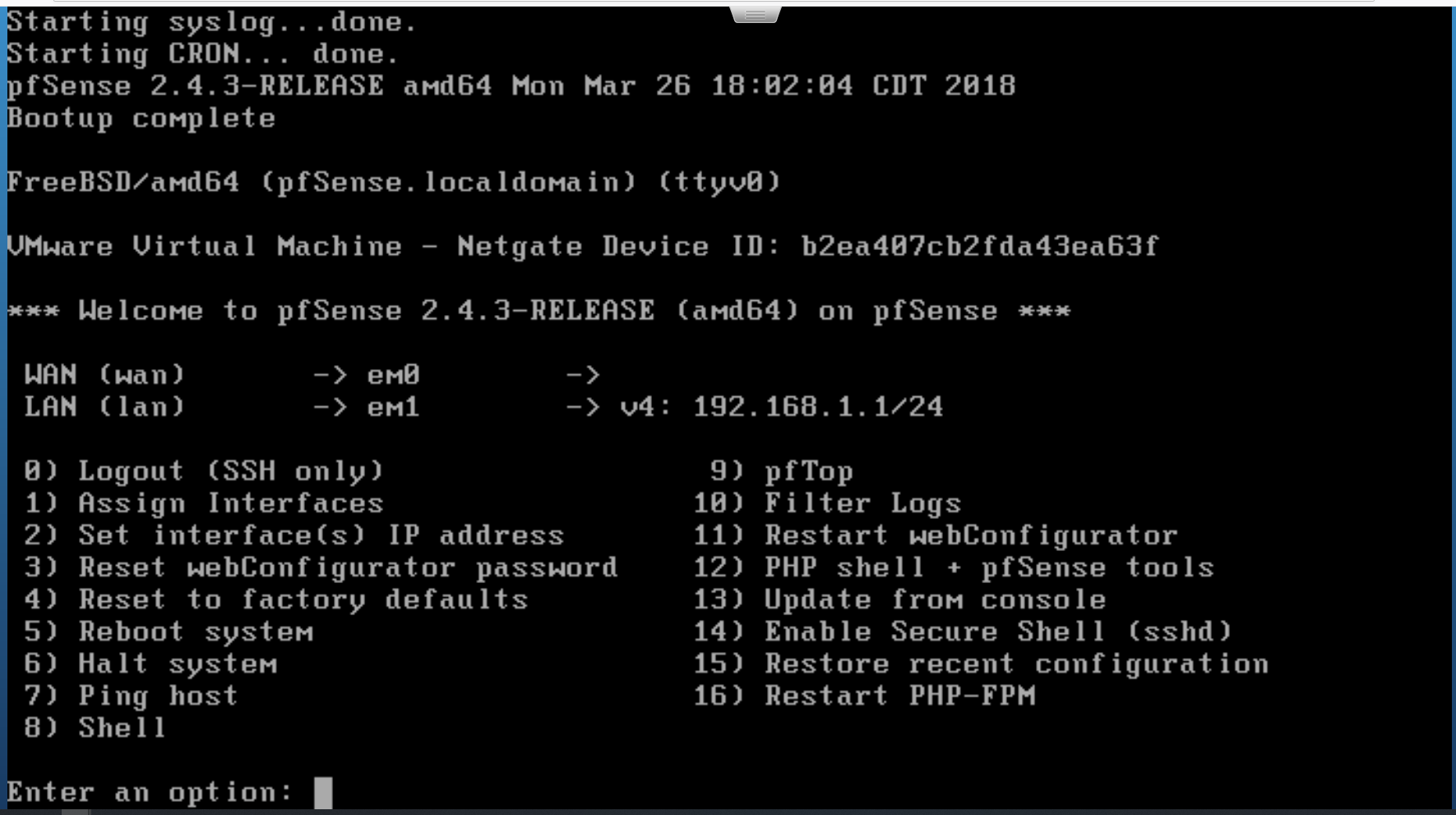

The basic installation of the pfSense operating system is nearly identical to installing it on bare-metal. Just click through the installer until the system reboots and you land on the set-up page.

|

||||||

|

|

||||||

|

*the pfSense setup page*

|

||||||

|

|

||||||

|

To make things easier on ourselves, we’re going to pre-configure a few things from here before opening the web interface. First, we need to set up the WAN interface. Even though my WAN doesn’t have DHCP, pfSense was able to automatically select which interfaces are supposed to be which.

|

||||||

|

|

||||||

|

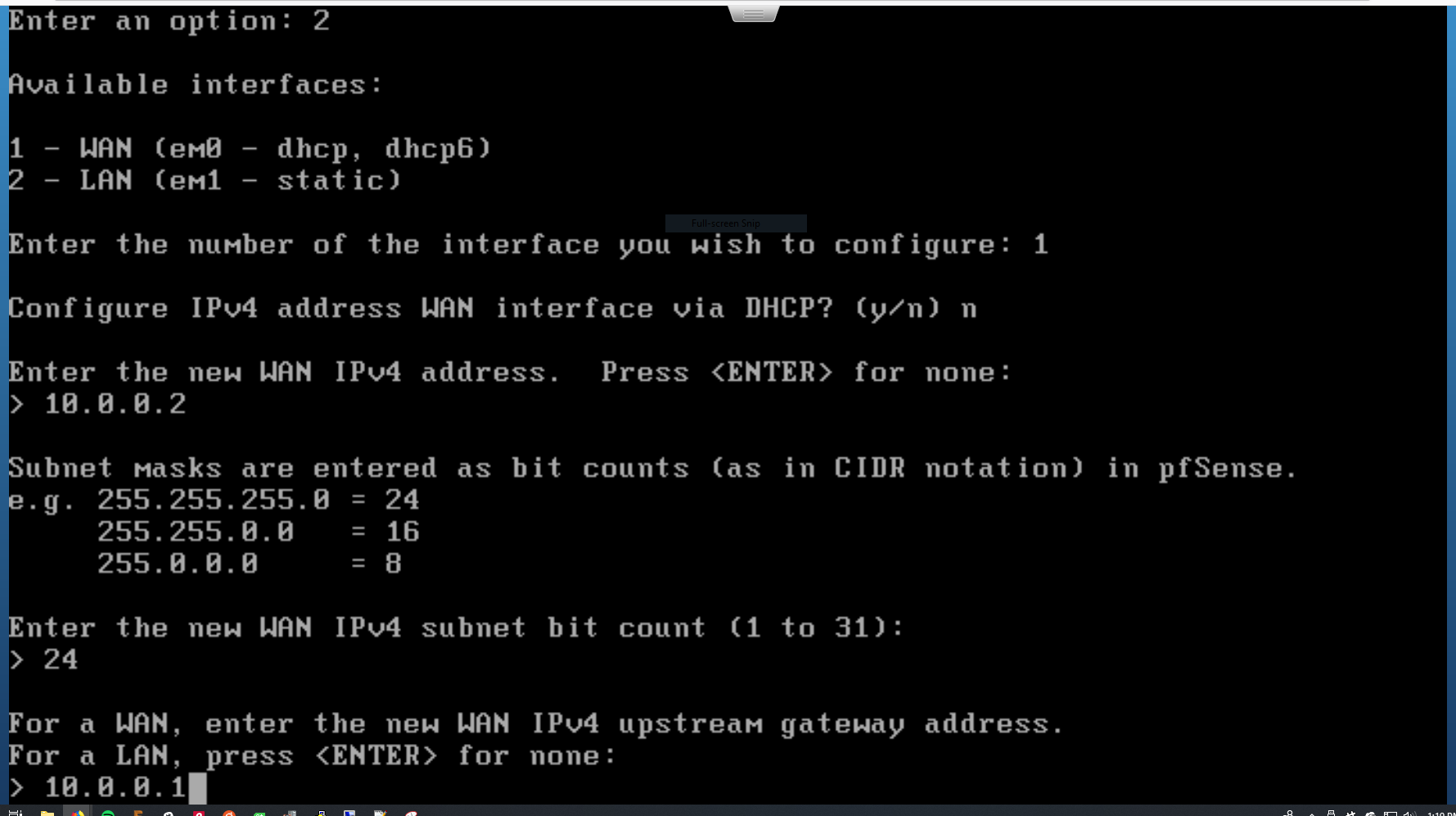

Select option 2 to Set interface(s) IP address.

|

||||||

|

|

||||||

|

*configuring the WAN interface*

|

||||||

|

|

||||||

|

This will launch an interactive wizard that will help you configure an interface. Select the WAN interface to configure, then just run through the wizard.

|

||||||

|

|

||||||

|

For me, this involved setting the static IP address of the port and pointing it toward my gateway.

|

||||||

|

|

||||||

|

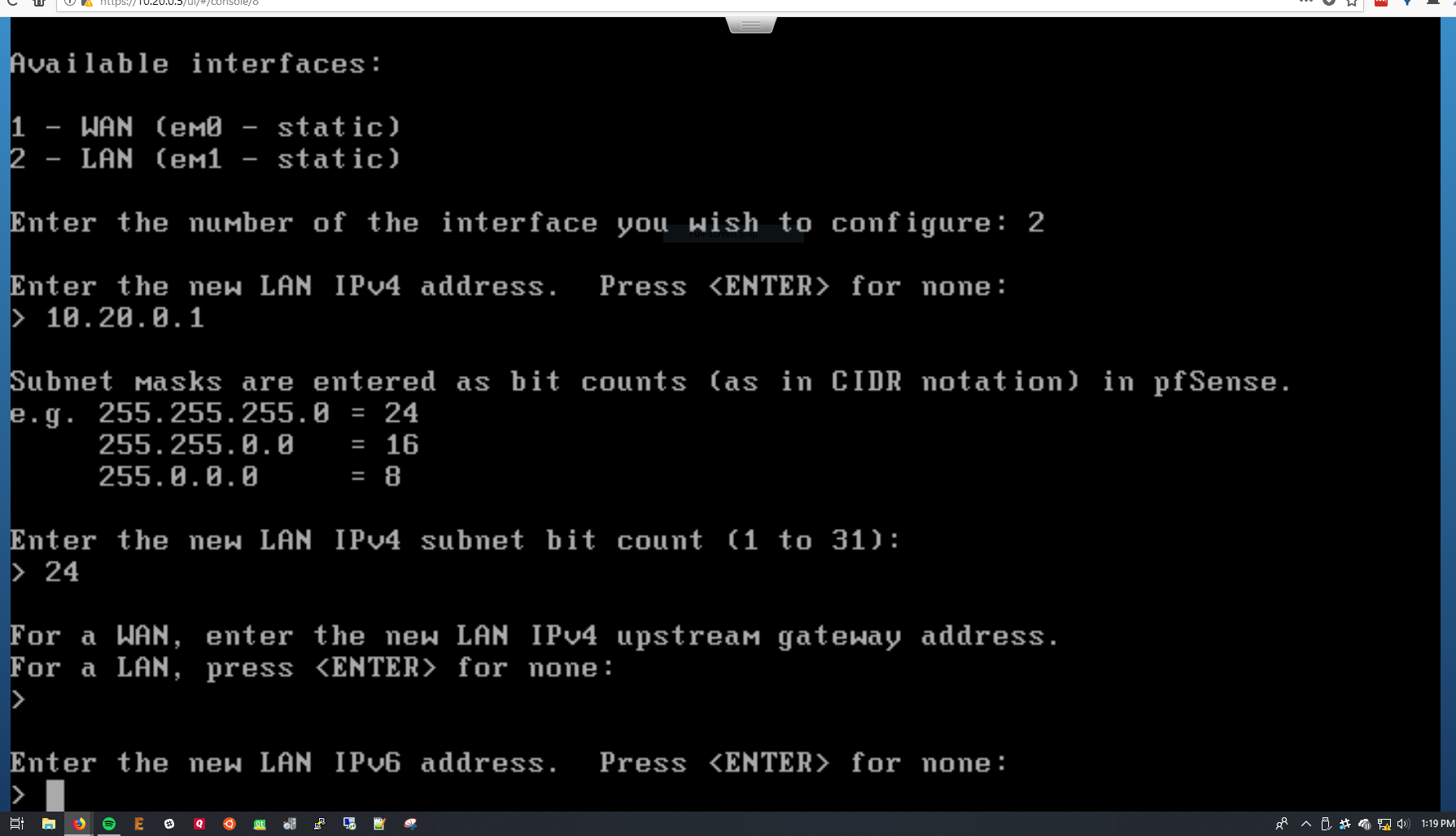

*configuring the LAN interface*

|

||||||

|

|

||||||

|

Now, we repeat the process for the LAN interface. Here you’ll be prompted to set the static IP again, as well as selecting a DHCP range for pfSense’s LAN port. Make sure this is the same address space as you planned earlier (for me, 10.20.0.X).

|

||||||

|

|

||||||

|

Select y to enable the DHCP server, and press enter to save.

|

||||||

|

|

||||||

|

## Step 4: Moving to the New Network

|

||||||

|

|

||||||

|

We now officially have a virtual firewall running, but before we can start using the new network, there are a few configuration things to modify.

|

||||||

|

|

||||||

|

### The Management Network (reprise)

|

||||||

|

|

||||||

|

In order to access our ESXi host from the new network, we need to move the vmk0 management interface over to the LAN switch we created earlier. However, we can’t just add it to the existing LAN port group (ESXi hates that, for some reason), so we’ll create a new port group. Access Networking > Port Groups > New Port Group.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

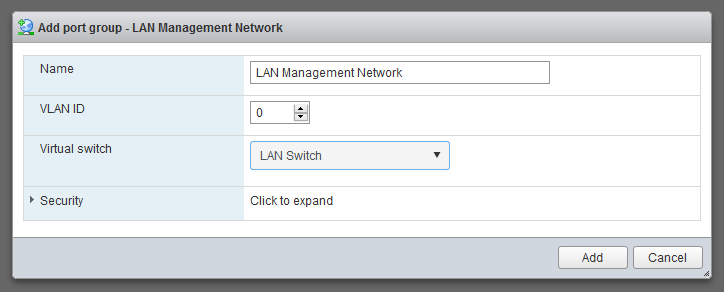

Create a new LAN Management Network port group on the same LAN switch we created earlier.

|

||||||

|

|

||||||

|

This will make sure it bridges with the LAN port on the new pfSense VM.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

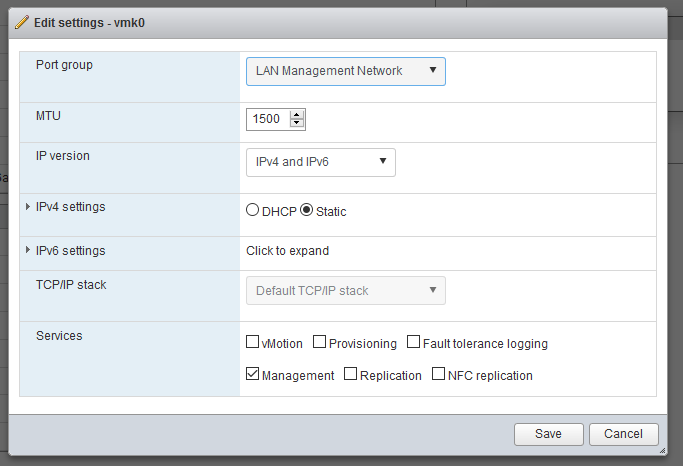

Now, go to Networking > VMkernel NICs > Edit Settings and change the port group to the new LAN Management Network port group we just created.

|

||||||

|

|

||||||

|

Note that after you save the settings, you’ll no longer be able to access the ESXi interface. This is normal.

|

||||||

|

|

||||||

|

### Move It Over!

|

||||||

|

|

||||||

|

Now, we can finally start using our new network! Move the connection to the switch (or the rest of your physical network) to the new port on the server that we added to the LAN switch we created at the beginning. This will connect your network to the pfSense LAN interface.

|

||||||

|

|

||||||

|

Finally, reset your computer’s IP address back to DHCP so it will pick up an address and DNS from pfSense.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

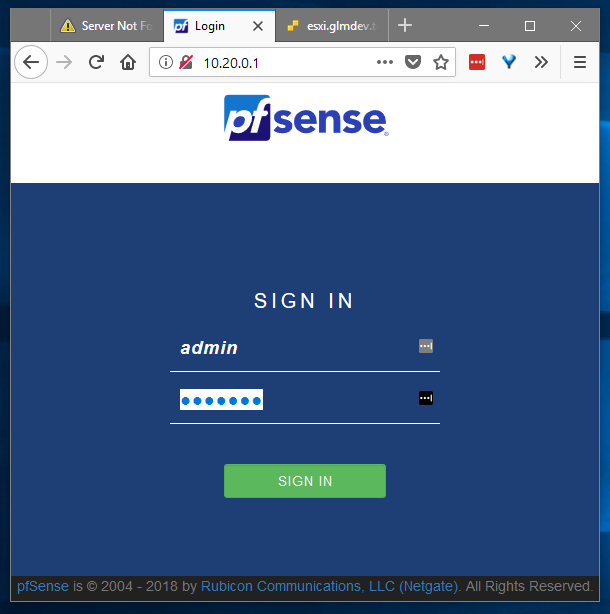

Open up your web browser of choice and navigate to the IP address you assigned to the pfSense LAN interface in Step 3, and **voila!** You should be presented with the pfSense web interface.

|

||||||

|

|

||||||

|

Log in using the default credentials:

|

||||||

|

|

||||||

|

admin

|

||||||

|

pfsense

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

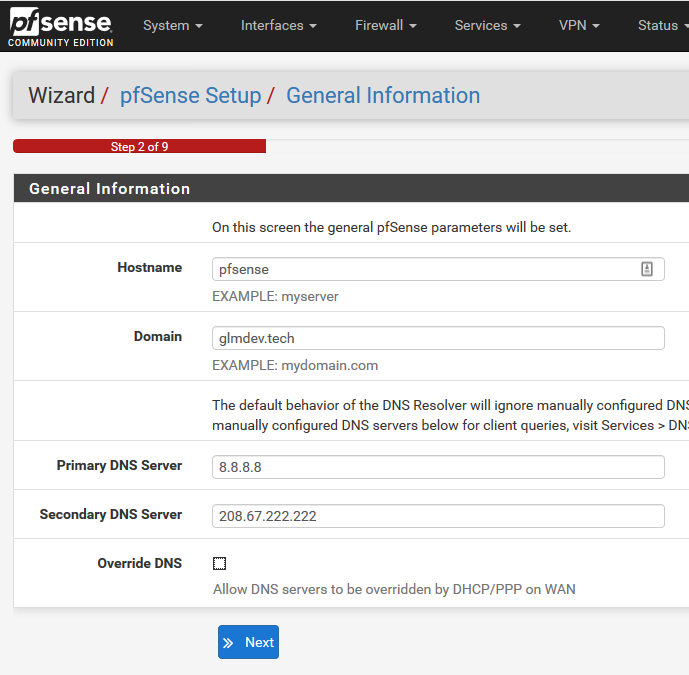

Now it’s just a matter of running through the standard pfSense setup wizard and you’re off to the races!

|

||||||

|

|

||||||

|

pfSense will behave exactly like it would if it were installed on a physical box. This means that everything from DNS to VLANs to captive portal will behave normally.

|

||||||

|

|

||||||

|

Plus, you should still be able to access the ESXi host. :)

|

||||||

|

|

||||||

|

## Conclusion

|

||||||

|

|

||||||

|

Virtualized networking is a great way to experiment with different firewall software without having your network down for long periods of time. It also lends a lot of flexibility when it comes to customizing network segments.

|

||||||

|

|

||||||

|

I hope this guide has been helpful, and if you have any questions, comments, or if you run into any issues, leave a comment down below, and I’ll do my best to help!

|

||||||

|

|

||||||

122

src/blog/posts/2018-08-06-free-www.md

Normal file

122

src/blog/posts/2018-08-06-free-www.md

Normal file

@@ -0,0 +1,122 @@

|

|||||||

|

---

|

||||||

|

layout: blog_post

|

||||||

|

title: Creating the Ultimate (Free) Personal Website

|

||||||

|

slug: Creating-the-Ultimate-Free-Personal-Website

|

||||||

|

date: 2018-08-06 00:01:00

|

||||||

|

tags: blog

|

||||||

|

permalink: /blog/2018/08/06/Creating-the-Ultimate-Free-Personal-Website/

|

||||||

|

blogtags:

|

||||||

|

- tutorial

|

||||||

|

- webdev

|

||||||

|

- hosting

|

||||||

|

---

|

||||||

|

|

||||||

|

For years, I’ve been roaming the web trying to find a good, free way to host my personal website. I’m a cheapskate, so I really didn’t want to pay for it…

|

||||||

|

|

||||||

|

After trying numerous “free tiers,” sketchy shared-hosting services, and overly-complicated free cloud options, I finally settled on what I consider to be the ultimate, secure, and free (as in cookies, and as in Freedom) hosting solution: GitHub pages + CloudFlare. In this tutorial, we’ll take a look at how to set it up.

|

||||||

|

|

||||||

|

## Step 0: Prerequisites

|

||||||

|

|

||||||

|

### 0.1 — You need a website.

|

||||||

|

|

||||||

|

](https://cdn-images-1.medium.com/max/2224/1*OZfWkxG6qE3CSzCqwf9EiA.png)*[glmdev.tech](https://glmdev.tech/)*

|

||||||

|

|

||||||

|

The first step in all of this, of course, is to actually have a website to host. So, create your personal website using good ole-fashioned static web development (HTML/CSS/JS/etc).

|

||||||

|

|

||||||

|

My site is a simple, one page Bootstrap ordeal. Save for Bootstrap and jQuery, it has only 4 lines of JavaScript.

|

||||||

|

|

||||||

|

### 0.2 — Get a custom domain name (optional).

|

||||||

|

|

||||||

|

While it’s not *required*, having a custom domain name makes your online presence seem somehow more… official. You can get .com domain names for super cheap from sites like [Google Domains](https://domains.google/).

|

||||||

|

|

||||||

|

## Step 1: Setting Up GitHub Pages

|

||||||

|

|

||||||

|

GitHub has an *awesome* free static hosting service called GitHub Pages. Essentially, it allows you to push your static site to a repository and GitHub will automatically host that site for free. forever. The only potential downside is that the source-code for your website will be available for anyone to view.

|

||||||

|

|

||||||

|

### 1.1 — Sign-in to GitHub and create a repo.

|

||||||

|

|

||||||

|

For the purposes of this tutorial, I’m going to assume you know [how Git & GitHub work](https://www.youtube.com/watch?v=AGO9nQTKkfI&list=PLIaeD4fuWZJZ8Kb0wf_mWl52R8DjTA1og).

|

||||||

|

|

||||||

|

Sign in to GitHub, and create a new repository. **This repo must be named in a special way to enable GitHub Pages.** It must take the following form:

|

||||||

|

|

||||||

|

<your github username>.github.io

|

||||||

|

|

||||||

|

So mine, for example, is called glmdev.github.io. This tells GitHub to activate GitHub pages. Now, on your local machine, add the repository as a remote and push your code to the master branch.

|

||||||

|

|

||||||

|

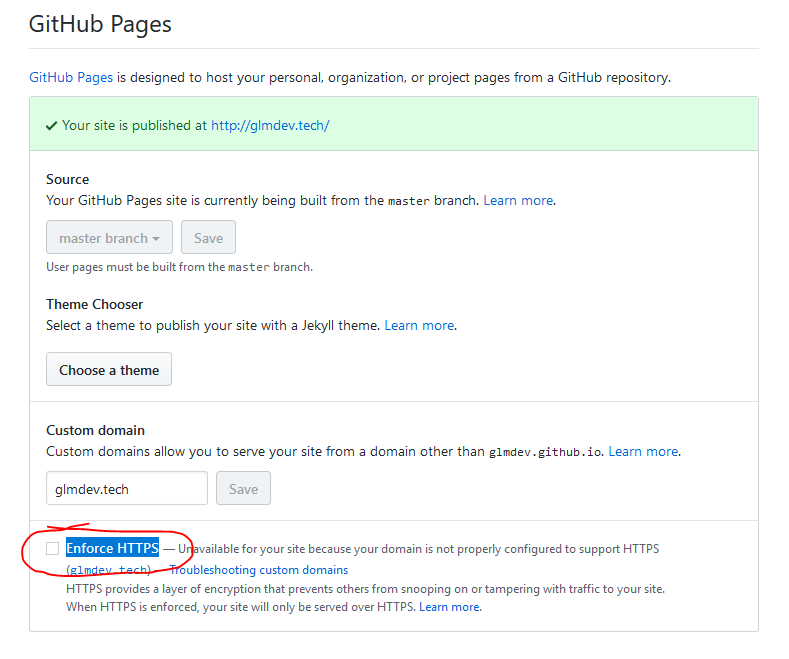

### 1.2 — Force clients to use HTTPS. (optional, but not really…)

|

||||||

|

|

||||||

|

**If you’re using a custom domain, this is not for you.**

|

||||||

|

|

||||||

|

If you’re not using a custom domain, you’re technically done at this point. You have a working static website. But, in the name of best practice, we are going to redirect non-secure clients to the HTTPS protocol by default.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

This can be done by going to the repository settings page, scrolling down to the GitHub pages section, and checking the “Enforce HTTPS” box.

|

||||||

|

|

||||||

|

By default, GitHub provides free SSL certificates for their *.github.io domains.

|

||||||

|

|

||||||

|

That’s it! If you don’t have a custom domain, you’re done. Congrats, and happy interneting!

|

||||||

|

|

||||||

|

## Step 2: Setting Up CloudFlare

|

||||||

|

|

||||||

|

CloudFlare is an amazing, free service that provides web caching, always-on, and SSL enforcement for websites. It also has a host of other features that you can tinker with. So, we’re going to be setting up CloudFlare to use with our custom domain.

|

||||||

|

|

||||||

|

### 2.1 — Create a CloudFlare account.

|

||||||

|

|

||||||

|

Head over to CloudFlare and sign up for an account, then click the add site button. Put in your custom domain name here.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

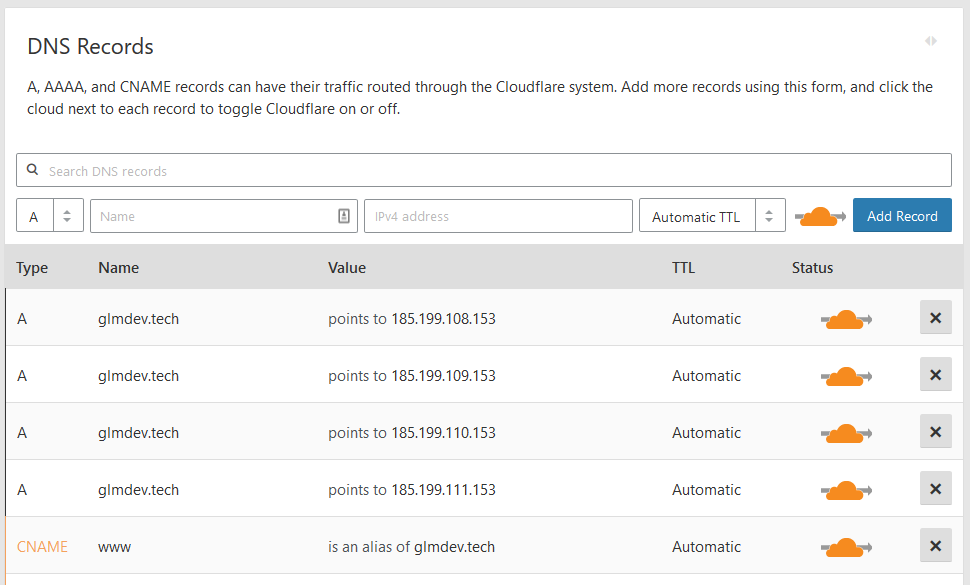

CloudFlare will then probe your existing DNS settings to try to import them. It’s important that you sign-in to your registrar and add any other DNS records you have (like MX mail records and TXT records) because after the setup, all DNS queries to your domain will run through the CloudFlare servers, not your registrar.

|

||||||

|

|

||||||

|

At this point, you should also add GitHub’s root IP addresses as A records to your domain. This will allow visitors to your domain to access the Pages site. I also added a simple CNAME record pointing the www. version of my site back to the root domain. The GitHub A records are:

|

||||||

|

|

||||||

|

185.199.108.153

|

||||||

|

185.199.109.153

|

||||||

|

185.199.110.153

|

||||||

|

185.199.111.153

|

||||||

|

|

||||||

|

### 2.2 — Configure your domain’s nameservers.

|

||||||

|

|

||||||

|

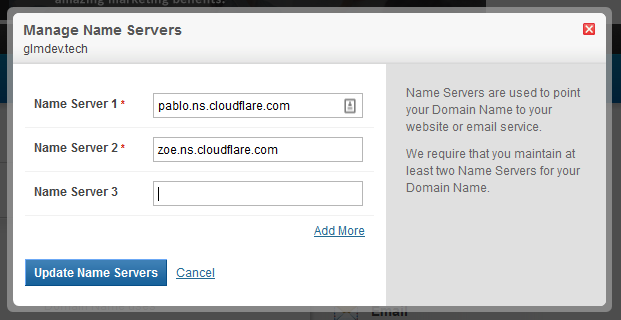

|

||||||

|

|

||||||

|

After you add the DNS records, you’ll be given the addresses of 2 CloudFlare nameservers to point your domain to. These will take the place of your registrar’s default servers.

|

||||||

|

|

||||||

|

Sign in to your registrar and click the settings for your domain’s nameservers. Then, replace the registrar’s default ones with the addresses CloudFlare gave you.

|

||||||

|

|

||||||

|

### 2.2 — Now the hardest part.

|

||||||

|

|

||||||

|

This part is the kicker. Because DNS is decentralized, you may have to wait up to 48 hours for the changes to propagate before you can finish setting up your domain. You can use a [DNS checker](https://dnschecker.org/) to see if your domain’s A records now point to a CloudFlare IP address, but even then it may take several more hours.

|

||||||

|

|

||||||

|

## Step 3: Add the Custom Domain to GitHub Pages

|

||||||

|

|

||||||

|

Once your DNS settings have propagated, you can tell GitHub to use the custom domain instead of the free .github.io one.

|

||||||

|

|

||||||

|

### 3.1 — Create the CNAME file.

|

||||||

|

|

||||||

|

To do this, create a file in the root of your repository called CNAME, then put the custom domain(s) in the file, one per line, like so:

|

||||||

|

|

||||||

|

glmdev.tech

|

||||||

|

www.glmdev.tech

|

||||||

|

|

||||||

|

Commit and push the file, then give GitHub a second to rebuild the site, and then you should be able to load your website at the custom domain.

|

||||||

|

|

||||||

|

### 3.2 — Enable SSL redirection. (optional, but again, not really…)

|

||||||

|

|

||||||

|

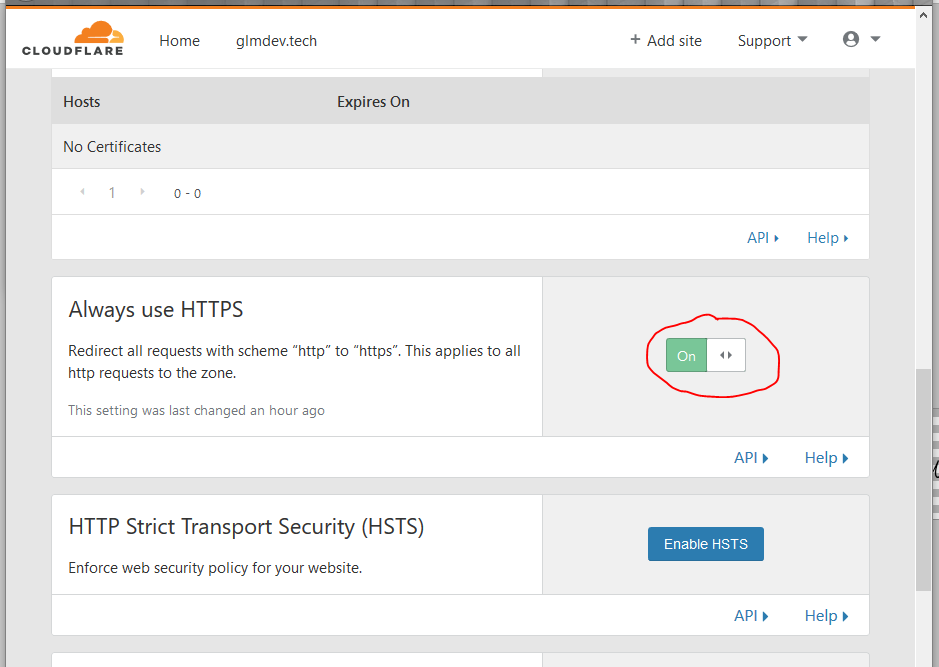

|

||||||

|

|

||||||

|

Lastly, we want non-secure HTTP clients to be automatically redirected to the HTTPS protocol whenever they can. To enable this, go to the CloudFlare dashboard, click the Crypto tab, and turn on “Always Use HTTPS.” While you’re at it, because GitHub also supports SSL, change the SSL mode at the top of the page from “Flexible” to “Full.”

|

||||||

|

|

||||||

|

## Conclusion

|

||||||

|

|

||||||

|

That’s it! Not terribly painful, actually, and we have a kick-ass free (and secure) website up and running.

|

||||||

|

|

||||||

|

A few things of note:

|

||||||

|

|

||||||

|

1. CloudFlare is really powerful. It can do a lot more than I covered in this tutorial, and I’d suggest tinkering around with it to really get the most out of the free service.

|

||||||

|

|

||||||

|

1. When you want to update/change your website, all you need to do is push the changes to the GitHub pages repository, and they’ll automatically be updated on the live site.

|

||||||

|

|

||||||

|

1. Any new DNS records you may add to your domain should be added from within the CloudFlare DNS panel, **not via your registrar.**

|

||||||

|

|

||||||

|

1. Lastly, if you don’t already make it a habit, I suggest going to your GitHub and CloudFlare account settings and enabling 2-Factor Authentication.

|

||||||

444

src/blog/posts/2018-11-15-rpi-part-1.md

Normal file

444

src/blog/posts/2018-11-15-rpi-part-1.md

Normal file

@@ -0,0 +1,444 @@

|

|||||||

|

---

|

||||||

|

layout: blog_post

|

||||||

|

title: Building a Raspberry Pi Cluster - Part I

|

||||||

|

slug: Building-a-Raspberry-Pi-Cluster-Part-I

|

||||||

|

date: 2018-11-15 01:00:00

|

||||||

|

tags: blog

|

||||||

|

permalink: /blog/2018/11/15/Building-a-Raspberry-Pi-Cluster-Part-I/

|

||||||

|

blogtags:

|

||||||

|

- tutorial

|

||||||

|

- raspberry pi

|

||||||

|

- hosting

|

||||||

|

---

|

||||||

|

|

||||||

|

Part I — The Basics

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

*This is Part 1 in my series on building an HPC-style Raspberry Pi cluster. Check out [Part 2](https://medium.com/@glmdev/building-a-raspberry-pi-cluster-aaa8d1f3d2ca?source=---------6------------------) and [Part 3](https://medium.com/@glmdev/building-a-raspberry-pi-cluster-f5f2446702e8?source=---------4------------------).*

|

||||||

|

|

||||||

|

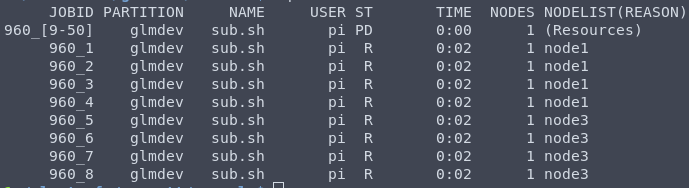

As a new project of mine, I’ve decided to build a miniature community-style cluster using Raspberry Pi computers and HPC-grade software. When I set out to do this, I discovered that instructions on setting this up are actually surprisingly sparse. So, I decided to document my progress in a series of guides. In this Part I, we will look at the basics of setting up the hardware and getting a cluster scheduler running.

|

||||||

|

|

||||||

|

## Step 0: Get The Hardware

|

||||||

|

|

||||||

|

### Parts list

|

||||||

|

|

||||||

|

* 3x Raspberry Pi 3 Model B — for the compute nodes

|

||||||

|

|

||||||

|

* 1x Raspberry Pi 3 Model B — for the master/login node

|

||||||

|

|

||||||

|

* 4x MicroSD Cards

|

||||||

|

|

||||||

|

* 4x micro-USB power cables

|

||||||

|

|

||||||

|

* 1x 8-port 10/100/1000 network switch

|

||||||

|

|

||||||

|

* 1x 6-port USB power-supply

|

||||||

|

|

||||||

|

* 1x 64GB USB Drive (or NAS, see below)

|

||||||

|

|

||||||

|

This project is scalable to as many RPis (and even non-RPi computers) of different kinds as you want, but for my cluster, I used 3 Pis with MicroSD cards of varying capacities connected to my network through an 8-port gigabit switch.

|

||||||

|

|

||||||

|

Let’s talk storage. Cluster computing requires a storage location that is shared across all of the different nodes so they can work on the same files as the jobs are farmed out. For a basic setup, you can use a 64GB USB drive plugged in to one of the Pis as shared storage (or a larger Micro SD card in one of them). However, as an alternative, if you have some kind of network-attached storage that you can export as an NFS share, this can also be used as the shared drive.

|

||||||

|

|

||||||

|

For example, I have about 750GB of btrfs RAID storage on a Linux box on my network. Instead of using a 64GB flash drive, I just exported an NFS share from this and mounted it on all the nodes. (Don’t worry, we’ll cover both.)

|

||||||

|

|

||||||

|

## Step 1: Flash the Raspberry Pis

|

||||||

|

|

||||||

|

The first step is to get our Pis up and running. Start by downloading the latest version of Raspbian, the Debian distribution that runs on the Pis. Download the command-line only “lite” version to save space: [Download Here](https://www.raspberrypi.org/downloads/raspbian/).

|

||||||

|

|

||||||

|

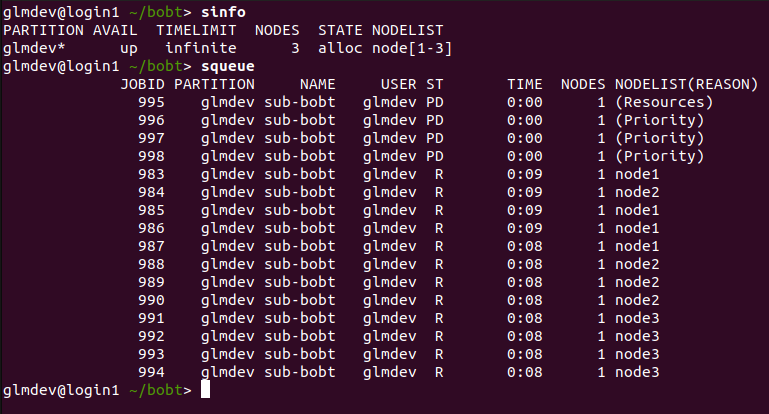

Now, download and install Etcher. Etcher is a simple program that we will use to write the downloaded Raspbian image to our SD cards. [Get Etcher here.](https://etcher.io/)

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

Fire up Etcher and plug in your MicroSD card (use an adapter).

|

||||||

|

|

||||||

|

Select the Raspbian ZIP file and hit flash.

|

||||||

|

|

||||||

|

Before we finish with the SD card, we want to enable SSH remote access from our Pi. To do this, open the “boot” drive on the SD card and create an empty file named ssh with no extension. On Windows, you can do this by opening Notepad and saving the blank file with no extension. On macOS and Linux, open the folder in a shell and run:

|

||||||

|

|

||||||

|

glmdev@polaris ~> touch ssh

|

||||||

|

|

||||||

|

Wash, rinse, and repeat this for the other 3 MicroSD cards. Once you’ve done this, plug the SD cards into the 4 nodes.

|

||||||

|

|

||||||

|

## Step 2: Network Setup

|

||||||

|

|

||||||

|

We want to make sure that our nodes have IP addresses that never change. This way, we can be sure that they can always talk to each other, which is critical for the cluster jobs.

|

||||||

|

|

||||||

|

I recommend setting the Pis up one at a time so you can keep track of which physical node is has what IP. This makes maintenance easier.

|

||||||

|

|

||||||

|

Start by plugging the switch into your network. Then, connect one Pi to the switch and plug it in. Wait a second for it to boot up.

|

||||||

|

|

||||||

|

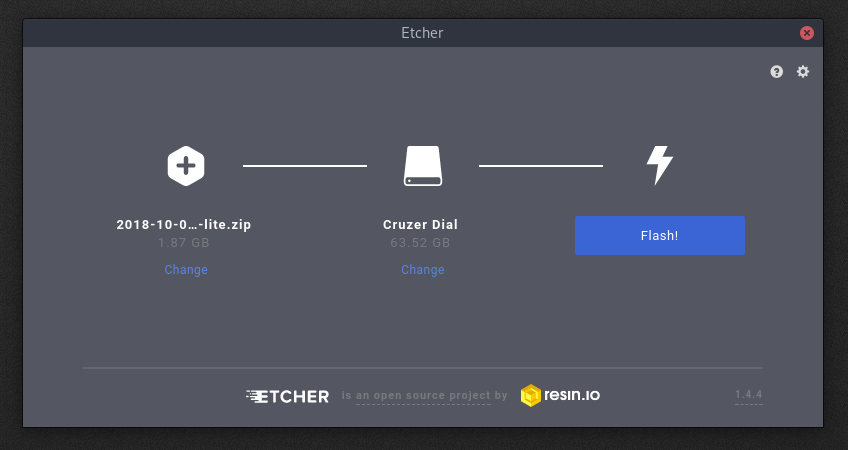

Once the Pi has booted, we can SSH into it to finish setting it up. To find the IP address of the Pi, either use your router’s interface to view attached devices. If you can’t do this, use a program like nmap to scan IP addresses on your network. Either way, look for a device named RASPBERRYPI and make a note of its IP address.

|

||||||

|

|

||||||

|

*Find the RASPBERRYPI device’s IP address.*

|

||||||

|

|

||||||

|

SSH into the Pi using:

|

||||||

|

|

||||||

|

ssh pi@ip.addr.goes.here

|

||||||

|

|

||||||

|

(default password: raspberry)

|

||||||

|

|

||||||

|

## Step 3: Set Up the Raspberry Pis

|

||||||

|

|

||||||

|

### 3.1: raspi-config

|

||||||

|

|

||||||

|

Now we can start setting up the Pi. First, get some basic config out of the way:

|

||||||

|

|

||||||

|

pi@raspberrypi~$ sudo raspi-config

|

||||||

|

|

||||||

|

This will open the config utility. Change the default password, set the locale and timezone, and expand the filesystem. Then, exit the utility.

|

||||||

|

|

||||||

|

### 3.2: setting the hostname

|

||||||

|

> **A word about hostnames:**

|

||||||

|

> SLURM, the cluster scheduler we will be using, expects hosts to be named following a specific pattern: *<whatever text here><a predictable number>*. So, when choosing hostnames for your RPis, it’s helpful to pick a system and number them in order (eg node01, node02, node03, node04, etc…).

|

||||||

|

|

||||||

|

Now, set the hostname:

|

||||||

|

|

||||||

|

sudo hostname node01 # whatever name you chose

|

||||||

|

sudo nano /etc/hostname # change the hostname here too

|

||||||

|

sudo nano /etc/hosts # change "raspberrypi" to "node01"

|

||||||

|

|

||||||

|

We use the systemd hostname command, edit the hostname file, and update the hosts file so local resolution works.

|

||||||

|

|

||||||

|

### 3.3: make sure the system time is right

|

||||||

|

|

||||||

|

The SLURM scheduler and the Munge authentication that it uses requires accurate system time. We’ll install the ntpdate package to periodically sync the system time in the background.

|

||||||

|

|

||||||

|

sudo apt install ntpdate -y

|

||||||

|

|

||||||

|

### 3.4: reboot

|

||||||

|

|

||||||

|

sudo reboot

|

||||||

|

|

||||||

|

***Repeat this process on all of the RPis.***

|

||||||

|

|

||||||

|

## Step 4: Shared Storage

|

||||||

|

> **A word on shared storage:**

|

||||||

|

> In order for a cluster to work well, a job should be able to be run on any of the nodes in the cluster. This means that each node needs to be able to access the same files. We will accomplish this by connecting a 64GB USB drive connected to the master node (more on that below) and exporting that drive as a network file system (NFS). Then, we can mount that NFS share on all the nodes so they can all share access to it.

|

||||||

|

> **Side note:**

|

||||||

|

> If, like me, you have a separate NAS box on your network that you would rather use as shared storage, you can export an NFS share from that box. Then, just mount that share on the nodes using the instructions below.

|

||||||

|

|

||||||

|

### 4.0: Login to the Master Node

|

||||||

|

|

||||||

|

We will discuss the master node more later, but one of our nodes will be the controller. Just pick one. :) In my cluster, the master is node01.

|

||||||

|

|

||||||

|

ssh pi@<ip addr of node01>

|

||||||

|

|

||||||

|

### 4.1: Connect & Mount Flash Drive

|

||||||

|

|

||||||

|

**4.1.1: Find the drive identifier.

|

||||||

|

**Plug the flash drive into one of the USB ports on the master node. Then, figure out its dev location by examining the output of lsblk:

|

||||||

|

|

||||||

|

glmdev@node01 ~> lsblk

|

||||||

|

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

|

||||||

|

mmcblk0 179:0 0 7.4G 0 disk

|

||||||

|

├─mmcblk0p1 179:1 0 43.8M 0 part /boot

|

||||||

|

└─mmcblk0p2 179:2 0 7.4G 0 part /

|

||||||

|

sda 8:16 0 59.2G 0 disk

|

||||||

|

└─sda1 8:17 0 59.2G 0 part

|

||||||

|

|

||||||

|

In this case, the main partition of the flash drive is at /dev/sda1.

|

||||||

|

|

||||||

|

**4.1.2: Format the drive.

|

||||||

|

**We’re first going to format the flash drive to use the ext4 filesystem:

|

||||||

|

|

||||||

|

sudo mkfs.ext4 /dev/sda1

|

||||||

|

> **A word of warning: **double check to be sure you’re not about to overwrite your root */* directory on accident.

|

||||||

|

|

||||||

|

**4.1.3: Create the mount directory.**

|

||||||

|

(Note that this should be the same across all the nodes.) In my cluster, I used /clusterfs:

|

||||||

|

|

||||||

|

sudo mkdir /clusterfs

|

||||||

|

sudo chown nobody.nogroup -R /clusterfs

|

||||||

|

sudo chmod 777 -R /clusterfs

|

||||||

|

|

||||||

|

**4.1.4: Setup automatic mounting.

|

||||||

|